How Many Megapixels Do You Actually Need?


Camera resolution in the early 2000s was a space race to the biggest and best. With the release of the era-defining 36-megapixel D800, Nikon ushered in the beginning of the end of that race and the start of what became the resolution doldrums.

But how many pixels does a photographer actually need?

The Role of Resolution in Photography

Working with analog cameras resulted in a nuanced sense of resolution: everyone started with the same blank canvas and could — so to speak — sketch in as much detail as was physically possible. In practice, that meant the careful selection of the film to be used based upon technical considerations (e.g. degree of grain or film speed) that sat alongside a certain “look” you might want to achieve (e.g. a preference for Velvia over Ektachrome). The chemical wizardry that produced these results was left up to the experts, although with black and white you had a little more latitude in your own darkroom.

The change to digital altered the relationship between photographer, camera, and image. Photons are photons and the image sensor simply counts them: what happens after the fact is post-production, be that in-camera or in your image editing software. That innate understanding of color and contrast still sat with the film companies, particularly where they manufactured cameras.

The demise of Kodak meant that Fuji assumed the sole mantle in this space, something it’s been happy to push with a range of film simulation modes. This was particularly relevant where processing power and memory storage were at a premium (AKA “should I shoot JPEG?”), but the proliferation of smartphones, and specifically image filters, has led to the expectation of one-tap experimentation (which obviously means the answer to the previous question is “no”).

The divorce of the physical medium (film) from the physical output (print) has naturally led to needing to know what resolution an image (or sensor) actually needs to be, and the starting point to understanding this question is deciding what your finished product will be. This is critical because ultimately a real physical print has a range of guiding parameters that largely control how it is perceived by a real physical eye.

These parameters vary depending upon whether you are shooting to print a photo book, acrylic, magazine cover, or billboard. The variation in size, print media, and print techniques — and, yes, resolution — combine to deliver the final product.

Calculating Resolution for Printed Photos

In some senses, the answer to the resolution question is deceptively simple: as many pixels as you need to see. However, there is a more complex backdrop to actually providing some numbers to flesh out “as many as you need to see.”

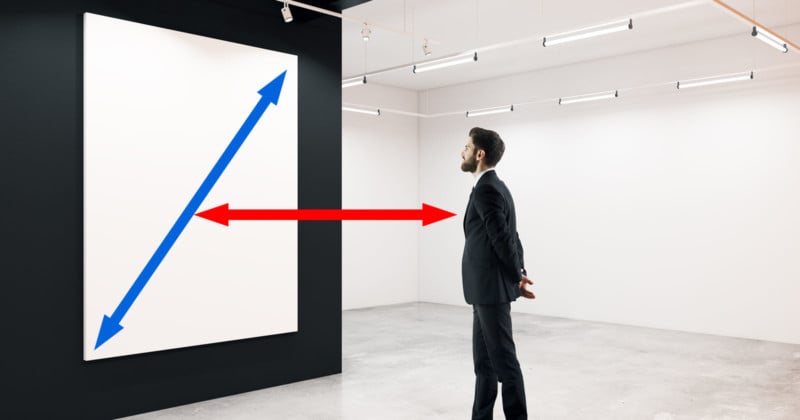

The starting point to this understanding is to remember that we are talking about the human eye and a physical print, which means having a basic notion of the distance at which the image will be viewed. Are you printing for a billboard or a photobook? The closer you are, the smaller the image needs to be or, conversely, the bigger the image, the further away you can be. It’s an obvious point, but how often do you pause to consider the viewing distance?

As a rule of thumb, the image diagonal should be about half to two-thirds of the viewing distance. Perhaps more helpfully, the viewing distance should be 1.5 to 2 times the image diagonal. If you produce an A4 print, the image diagonal is about 14 inches, which at twice the diagonal equates to a viewing distance of about 28 inches (a little under 2.5 feet), which seems quite common in galleries.
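The rule of thumb is easy to sketch in a few lines of Python. This is only an illustration: the function name and the use of 8.27×11.69 inches for A4 are assumptions of the sketch, not part of any standard tool.

```python
import math

def viewing_distance_range(width_in, height_in, low=1.5, high=2.0):
    """Return (diagonal, nearest, farthest) in inches.

    Applies the rule of thumb that the viewing distance is
    1.5 to 2 times the image diagonal.
    """
    diagonal = math.hypot(width_in, height_in)
    return diagonal, low * diagonal, high * diagonal

# A4 paper measures roughly 8.27 x 11.69 inches.
diagonal, near, far = viewing_distance_range(8.27, 11.69)
print(f"diagonal {diagonal:.1f} in, view from {near:.0f} to {far:.0f} in")
```

The 28-inch figure used for the A4 print sits at the far end of that range.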

Of course, that is only one-half of the equation (image size) and doesn’t tell you how many pixels you need. The second half is consideration of the pixels per inch (PPI) required; how many pixels should be used to fool the eye into seeing a continuous tone for the viewing distance specified? In this instance, there is an answer to life, the universe, and everything. It’s 3438, or more specifically 3438/viewing distance (in inches).

3438 is the Magic Number for Calculating PPI

Taking our example above: 3438/28 ≈ 123 PPI. For our A4 print that equates to a surprisingly low 1500×1000 or so, about 1.5 megapixels. There are two other common cases. With a 6×4 you may view it about 12 inches away, which leads to 286 PPI and so the common recommendation for printing at 300 PPI (requiring a 2-megapixel image). For an A1 canvas viewed 4 feet away, the PPI would be about 72 with a minimum image size of 4 megapixels.
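These figures can be checked with a short Python sketch. The function names are hypothetical, and the print sizes are the nominal dimensions in inches; the results agree with the examples above to within rounding.

```python
ACUITY_CONSTANT = 3438  # the magic number, valid for distances in inches

def required_ppi(viewing_distance_in):
    """Pixels per inch needed at a given viewing distance (inches)."""
    return ACUITY_CONSTANT / viewing_distance_in

def required_megapixels(width_in, height_in, viewing_distance_in):
    """Minimum image size in megapixels for a print of the given size."""
    ppi = required_ppi(viewing_distance_in)
    return (width_in * ppi) * (height_in * ppi) / 1e6

# (width, height, viewing distance), all in inches
examples = {
    "A4 at 28 in": (8.27, 11.69, 28),
    "6x4 at 12 in": (6, 4, 12),
    "A1 at 48 in": (23.39, 33.11, 48),
}
for name, (w, h, d) in examples.items():
    print(f"{name}: {required_ppi(d):.0f} PPI, {required_megapixels(w, h, d):.1f} MP")
```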

The science behind the numbers is based upon the visual acuity of the human eye. For someone in good health, the resolution of the eye is 1 arc minute (0.000290888 radians) of angle which, after some high school trigonometry, gives us the following general formula:

PPI = 1 / (viewing distance × tan(1 arc minute)) ≈ 3438 / viewing distance (in inches)

If you would like to work with a viewing distance in centimeters, then the magic number is about 8732 (3438 × 2.54), and the result is still in pixels per inch.
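For anyone who wants to see where the magic number comes from, here is a minimal Python sketch of the trigonometry, assuming the 1 arc minute acuity figure quoted above. The variable and function names are illustrative only; the metric helper simply converts the distance to inches before applying the same formula.

```python
import math

# One arc minute is 1/60 of a degree, about 0.000290888 radians.
ONE_ARC_MINUTE = math.radians(1 / 60)

# The smallest detail the eye resolves at distance d spans
# d * tan(1 arc minute), so the pixels needed per unit length are
# 1 / (d * tan(1 arc minute)). With d = 1 that is the magic number.
magic_number = 1 / math.tan(ONE_ARC_MINUTE)

def ppi_from_distance_cm(distance_cm):
    """Required pixels per inch for a viewing distance given in centimeters."""
    return magic_number / (distance_cm / 2.54)

print(round(magic_number))
```

Because the angle is so small, tan(1 arc minute) is practically equal to the angle in radians, which is why 1/0.000290888 gives the same number.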

If you stick to this two-step process — estimate the viewing distance and calculate the required PPI — then you will have a good idea of the resolution needed to achieve the results you want.

Do We Need More Resolution?

What’s surprising about the above calculations is how little resolution you actually need; if you want that A1 canvas, which measures 23.39×33.11in (59.4×84.1cm), then just shoot 4-megapixel images. The obvious conclusion to this number is that you should shoot at a lower resolution (maybe 6 megapixels), although you can only do this for JPEGs (Nikon cameras have long allowed you to choose FX or DX modes, but that then changes the effective focal length).

However, resolution is important for two key reasons. Firstly, it provides latitude in your workflow. For example, if your image is slightly askew then straightening it will require cropping, which inevitably means lost pixels.

Perhaps more importantly, you have the option of cropping as a creative choice in the post-production workflow. Photographers have always done this, but the advent of high-resolution sensors means that you ultimately don’t lose any detail from the printed output. The more pixels you have, the more choices you have in how you crop in your creative output or, indeed, multiple realizations.
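To put a rough number on that latitude, the sketch below compares a sensor's pixel count with the minimum a print needs. It uses the D800's 36 megapixels and the 1.5 megapixels of the A4 example; the function names are hypothetical and the crop is assumed to keep the original aspect ratio.

```python
import math

def max_linear_crop(sensor_megapixels, required_megapixels):
    """Factor by which each side can shrink before the print requirement is missed."""
    return math.sqrt(sensor_megapixels / required_megapixels)

def remaining_megapixels(sensor_megapixels, keep_fraction_per_side):
    """Megapixels left after keeping a fraction of each side of the frame."""
    return sensor_megapixels * keep_fraction_per_side ** 2

print(f"{max_linear_crop(36, 1.5):.1f}x")          # crop headroom for an A4 print
print(f"{remaining_megapixels(36, 0.5):.0f} MP")   # after cropping to half the width and height
```

Pixel count falls with the square of the crop, so keeping half of each side leaves a quarter of the pixels.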

The second point is more pertinent in the digital age and is perhaps a result of it. People want to see more detail. In an age in which we are used to “pinch and zoom,” there appears to be a desire to use that apparent infinite ability to zoom to see more and more in a way that reminds me of the famous 1977 film Powers of Ten.

In the analog photographic world, this is perhaps best illustrated through the work of German photographer Andreas Gursky who is famous for his incredibly detailed images of architecture and landscapes. And for selling incredibly expensive photos as well… Rhein II went for $4,338,500 in 2011.

Produced as a 143×73 inch (3.6×1.9m) chromogenic print mounted behind acrylic, it looks flat, perhaps even boring. Yet that’s not the point; Gursky has pursued the “hyper-real” image and in this sense, you probably need to see it in the flesh to appreciate how all-encompassing it is. It’s undoubtedly designed to overwhelm your senses (Gursky describes his process to The Guardian).

Digital Photo Viewing and the Megapixel Wars

As photographers, we can be criticized as pixel peepers, yet maybe this is an innate desire which is reflected in the growth and popularity of gigapans. You just have to see the interest generated by the likes of the 717-gigapixel photo of The Night Watch. Likewise, the success of Google Earth is in part predicated on the same fascination. In the photographic space, ZoomHub – the open-source successor to Microsoft’s ZoomIt – uses DeepZoom to smoothly zoom in and out of high resolution images.

So the next time you create a high-resolution panorama or shoot with a 100-megapixel back, try creating a ZoomHub image for display online and indulge your pixel-peeping inner self (and that of others). This indulgence may also point to a desire for even higher resolution sensors.

I hope that the Leica M11 is a marker for the beginning of a new megapixel space race…

Image credits: Stock photo licensed from Depositphotos