Photographers Need to Stop Worshiping Dynamic Range

Photography has always had a weakness for metrics, but dynamic range has taken on a peculiar authority in the digital era. It is treated not just as a specification, but as a verdict. Cameras are ranked, dismissed, or praised based on differences of less than a stop, as if such a number alone could determine the quality of an image.

The appeal is obvious. Dynamic range is measurable, repeatable, and grounded in real physics. It’s uniquely objective in a medium that is otherwise frustratingly subjective. But that is exactly why it has become overvalued. When a complex system can be reduced to a number, the number tends to take over the conversation.

The problem is not whether dynamic range matters. It does. The problem is that it has been elevated far beyond its actual role in photography.

What Dynamic Range Really Is (and Isn’t)

At its core, dynamic range is the ratio between the strongest signal a sensor can capture before saturating (full well capacity) and the weakest signal it can distinguish from noise (read noise). Expressed in stops, it is simply the base-2 logarithm of that ratio: every stop is a doubling.

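$$\mathrm{DR}_{\text{stops}} = \log_2\!\left(\frac{N_{\text{FWC}}}{\sigma_{\text{read}}}\right)$$

where $N_{\text{FWC}}$ is the full well capacity and $\sigma_{\text{read}}$ the read noise, both in electrons.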

This definition is important because it reveals something that is often overlooked: dynamic range is not a single “thing” that engineers can dial up at will.

Even if most of the equation is Greek to you, you can probably tell that DR is the result of competing constraints — increasing full well capacity improves highlight headroom but is tied to pixel size and physical structure. Reducing read noise improves shadow performance but quickly runs into diminishing returns as fundamental noise sources — like photon shot noise and dark noise — begin to dominate.

In other words, dynamic range is bounded not just by engineering, but by physics.
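
The definition is one line of arithmetic, and writing it out makes the two levers explicit. A minimal sketch, with a hypothetical 50,000 e- full well and 3 e- read noise (not the figures of any particular camera):

```python
import math

def dr_stops(full_well_e: float, read_noise_e: float) -> float:
    """Engineering dynamic range in stops: log2(full well / read noise)."""
    return math.log2(full_well_e / read_noise_e)

def dr_decibels(full_well_e: float, read_noise_e: float) -> float:
    """The same ratio in decibels, the unit sensor datasheets often quote."""
    return 20 * math.log10(full_well_e / read_noise_e)

# Hypothetical sensor: 50,000 e- full well, 3 e- read noise.
base = dr_stops(50_000, 3.0)
more_headroom = dr_stops(100_000, 3.0)  # double the full well capacity
quieter = dr_stops(50_000, 1.5)         # halve the read noise

print(f"base: {base:.2f} stops ({dr_decibels(50_000, 3.0):.1f} dB)")
print(f"2x full well:   +{more_headroom - base:.2f} stops")
print(f"0.5x read noise: +{quieter - base:.2f} stops")
```

Doubling the full well and halving the read noise each buy exactly one stop, and every stop corresponds to about 6.02 dB.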

And, crucially, it is not a measure of image quality in any holistic sense. It does not describe color accuracy, tonal rendering, microcontrast, noise character, or any number of other critical qualities. It describes only one specific ratio: between the brightest and the dimmest signal the sensor can record.

The Real Breakthrough Already Happened

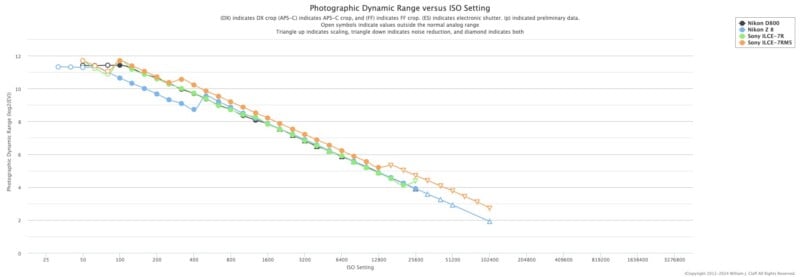

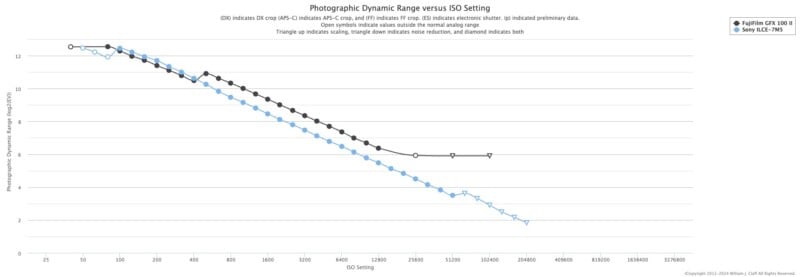

The modern fixation on dynamic range is rooted in a genuine technological shift. I’d argue that shift came with the Nikon D800/D800E/D810 and Sony a7R. The 36MP sensor in those cameras introduced dramatically lower read noise at base ISO, enabled by advances in on-chip ADC design, improved signal pathways, and more efficient analog processing.

The result was what photographers experienced as a kind of liberation: shadows could be lifted aggressively without catastrophic noise penalties. Exposure became more forgiving. Highlight protection became easier to prioritize without sacrificing detail in the shadows. RAW files felt elastic in a way they hadn’t before.

That was a real revolution.

But revolutions do not continue indefinitely. Measured data show that subsequent improvements in DR have been incremental. In measured photographic dynamic range, the Nikon D800 (2012) and Sony a7R (2013) cluster tightly with the Nikon Z8 (2023) and Sony a7R V (2022). That is roughly a decade of sensor development, yet it has produced marginal refinements rather than step changes, with practical dynamic range benefits close to zero.

This matters because it changes what the number signifies. When dynamic range was increasing dramatically, each gain changed photographic practice. Now that the numbers have plateaued, the remaining differences rarely change how anyone actually shoots.

The Physics of the Plateau

The slowdown in dynamic range improvement is not due to stagnation. It is due to limits.

Modern sensors already operate with extremely low read noise, often on the order of a few electrons. At that level, further reductions yield diminishing returns because other noise sources, particularly photon shot noise, begin to dominate. Photon shot noise is independent of sensor design: it grows with the square root of the captured signal, and try as we might, we cannot eliminate the randomness inherent in light itself.
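
A minimal sketch of why read noise stops mattering, assuming a pixel whose only noise sources are photon shot noise (the square root of the signal, in electrons) and read noise, added in quadrature. Dark current and pattern noise are ignored, and the 50 e- shadow signal is a hypothetical value:

```python
import math

def snr(signal_e: float, read_noise_e: float) -> float:
    """Shot noise (sqrt of the signal) and read noise added in quadrature.
    Dark current and pattern noise are ignored in this sketch."""
    return signal_e / math.sqrt(signal_e + read_noise_e**2)

SHADOW_SIGNAL_E = 50  # hypothetical deep-shadow signal, in electrons

for read_noise in (5.0, 3.0, 2.0, 1.0, 0.0):
    print(f"read noise {read_noise:.0f} e-: SNR {snr(SHADOW_SIGNAL_E, read_noise):.2f}")
```

Under these assumptions, cutting read noise from 5 e- to 3 e- visibly improves this shadow tone, while going from 1 e- to a perfect 0 e- gains only about 1%, because the shot-noise ceiling of sqrt(50) ≈ 7.07 does not move.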

On the highlight side, full well capacity is constrained by pixel geometry. Larger pixels can store more charge, but increasing pixel size reduces resolution — something the market has consistently resisted. Engineers can improve efficiency, deepen photodiodes, and refine micro-lens structures, but these are incremental optimizations, not transformative changes.
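
The geometry trade-off can be sketched with two simplifying assumptions, both hypothetical: full well capacity scales with pixel area at a fixed charge density, and read noise stays the same regardless of pixel size. The figures are per pixel, not normalized to a common output size:

```python
import math

# Hypothetical constants for illustration only; real sensors vary.
FULL_WELL_DENSITY = 2000.0  # electrons per square micrometre of pixel area (assumed)
READ_NOISE_E = 3.0          # electrons RMS, assumed independent of pixel size
SENSOR_W_UM, SENSOR_H_UM = 36_000, 24_000  # full-frame dimensions in micrometres

def pixel_stats(pitch_um: float) -> tuple[float, float, float]:
    """Return (full well in e-, per-pixel DR in stops, megapixels) for a pixel pitch."""
    full_well = FULL_WELL_DENSITY * pitch_um**2
    per_pixel_dr = math.log2(full_well / READ_NOISE_E)
    megapixels = (SENSOR_W_UM / pitch_um) * (SENSOR_H_UM / pitch_um) / 1e6
    return full_well, per_pixel_dr, megapixels

for pitch in (3.76, 4.88, 5.95, 8.4):
    fw, dr, mp = pixel_stats(pitch)
    print(f"{pitch:4.2f} um pitch: {mp:5.1f} MP, full well {fw:7.0f} e-, "
          f"per-pixel DR {dr:5.2f} stops")
```

In this model every doubling of pixel pitch quadruples the charge a pixel can hold (two more stops per pixel) and divides the pixel count by four, which is the trade the market keeps rejecting.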

Even the role of bit depth is often misunderstood. Increasing ADC precision beyond the noise floor does not increase usable dynamic range; it simply samples noise more finely. The effective DR is determined by signal-to-noise ratio, not by how many bits are used to encode it, so even with high bit-depth ADCs (e.g., 14-bit or higher), the limit is noise rather than quantization.

That is why 14-bit vs 16-bit discussions often miss the point: if the noise floor already sits above the least significant bit, additional bits add no real information. It is also why 14-bit and 16-bit modes on Fujifilm GFX cameras show almost no difference, even in lab measurements.
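
The bit-depth argument can be checked with the standard approximation that treats quantization error as uniform noise with an RMS of one LSB divided by the square root of 12, added in quadrature to read noise. The full well, the read noise, and the assumption that full well maps exactly to ADC full scale are all hypothetical:

```python
import math

def effective_noise_e(read_noise_e: float, full_well_e: float, bits: int) -> float:
    """Read noise and quantization noise combined in quadrature, in electrons."""
    lsb_e = full_well_e / 2**bits          # electrons per ADC step (full well = full scale, assumed)
    quant_noise_e = lsb_e / math.sqrt(12)  # RMS of a uniform error spanning one step
    return math.hypot(read_noise_e, quant_noise_e)

def dr_stops(full_well_e: float, noise_e: float) -> float:
    return math.log2(full_well_e / noise_e)

FULL_WELL_E = 50_000  # hypothetical
READ_NOISE_E = 3.0    # hypothetical

for bits in (12, 14, 16):
    noise = effective_noise_e(READ_NOISE_E, FULL_WELL_E, bits)
    print(f"{bits}-bit: LSB {FULL_WELL_E / 2**bits:5.2f} e-, "
          f"total noise {noise:.2f} e-, DR {dr_stops(FULL_WELL_E, noise):.2f} stops")
```

With these numbers, moving from 12 to 14 bits is worth roughly half a stop, because a 12-bit step (about 12 e-) is coarser than the 3 e- read noise. Moving from 14 to 16 bits is worth well under a tenth of a stop, because the 14-bit step (about 3 e-) already sits at the noise floor.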

Taken together, these constraints explain why dynamic range has flattened into small, hard-won gains. The easy improvements are gone. What remains is refinement.

The Misunderstanding of Usable Dynamic Range

Not all dynamic range is equally usable.

Lab measurements often define DR at a specific signal-to-noise ratio (SNR), such as SNR=1. But a signal at SNR=1 is barely distinguishable from noise. In practice, photographers require higher SNR thresholds (e.g., SNR=3 or SNR=5) for visually clean results.

This means that the effective dynamic range in real-world images is often smaller than the headline number.
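
How much the threshold matters can be sketched by solving for the smallest signal that reaches a target SNR. This assumes a shot-plus-read-noise model, S / sqrt(S + r²) = k with S and r in electrons, and a hypothetical sensor with a 50,000 e- full well and 3 e- read noise:

```python
import math

def min_signal_for_snr(target_snr: float, read_noise_e: float) -> float:
    """Smallest signal S (electrons) with S / sqrt(S + r^2) = target_snr.
    This is the positive root of S^2 - k^2 S - k^2 r^2 = 0."""
    k2 = target_snr**2
    return (k2 + math.sqrt(k2**2 + 4 * k2 * read_noise_e**2)) / 2

def dr_at_snr(full_well_e: float, read_noise_e: float, target_snr: float) -> float:
    """Stops between saturation and the dimmest signal that meets the SNR threshold."""
    return math.log2(full_well_e / min_signal_for_snr(target_snr, read_noise_e))

FULL_WELL_E, READ_NOISE_E = 50_000, 3.0  # hypothetical sensor

for k in (1, 3, 5, 10):
    print(f"SNR={k:>2}: {dr_at_snr(FULL_WELL_E, READ_NOISE_E, k):.1f} stops")
```

Under these assumptions, the same sensor loses roughly two stops going from SNR=1 to SNR=3, and roughly three going to SNR=5.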

Additionally:

- Shadow recovery amplifies noise non-linearly

- Color fidelity degrades faster than luminance in the deep shadows

- Fixed-pattern noise and banding can emerge under extreme pushes

- DR numbers tell you nothing about tonal quality

- Not all noise is aesthetically equal

So while a sensor may measure 14+ stops in a lab, the number of aesthetically usable stops is lower — and more importantly, often already sufficient.

Most Scenes Are Not Pushing the Limits

One of the most persistent misconceptions in the DR debate is that photography is constantly operating at the edge of sensor capability. In reality, most scenes fall well within the dynamic range of any modern interchangeable lens camera.

An overcast day might span six to eight stops. Indoor scenes often fall below that. Even many outdoor scenes rarely exceed ten or twelve stops unless they involve extreme contrast, such as direct sunlight paired with deep shadow.

Modern full-frame sensors already capture roughly thirteen to fifteen stops under ideal conditions. That means, in practice, many scenes are fully contained within the sensor’s capabilities. Others can be managed with modest exposure decisions or simple compromises.
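
Scene contrast converts to stops the same way: log2 of the ratio between the brightest and darkest luminances you want to keep detail in. The luminances below are hypothetical, and the 14-stop sensor figure is an assumption taken from the middle of the thirteen-to-fifteen-stop range:

```python
import math

SENSOR_DR_STOPS = 14.0  # assumed: middle of the 13-15 stop range for modern full frame

def stops(ratio: float) -> float:
    """Convert a luminance (contrast) ratio into stops."""
    return math.log2(ratio)

# Hypothetical brightest/darkest luminances in cd/m^2, for illustration only.
scenes = {
    "overcast street": (800, 5),
    "sunlit landscape with open shade": (6_000, 4),
    "noon sun with deep shadow": (10_000, 0.5),
}

for name, (brightest, darkest) in scenes.items():
    scene_stops = stops(brightest / darkest)
    slack = SENSOR_DR_STOPS - scene_stops
    verdict = "fits" if slack >= 0 else "needs a compromise"
    print(f"{name}: {scene_stops:.1f} stops, {verdict} ({slack:+.1f} stops of slack)")
```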

This shifts the burden away from the sensor and onto the photographer. The question becomes less about whether the camera can handle the scene and more about how the photographer chooses to interpret it.

Output Dynamic Range

Perhaps the most overlooked aspect of the entire discussion is this: even if your camera can capture enormous dynamic range, you cannot display most of it.

Every output medium imposes its own limits.

A typical display — what most people use on phones, laptops, and standard monitors — can reproduce roughly six to eight stops of dynamic range in a perceptual sense. Even high-end emissive displays with large contrast ratios are still far below what modern sensors can capture. HDR displays expand this somewhat, but they are not yet universal, and their rendering depends heavily on tone mapping.

Print is even more constrained. A high-quality photographic print might achieve a dynamic range equivalent of around six to seven stops, depending on paper type, ink, and viewing conditions. Matte papers compress contrast further; glossy papers extend it slightly, but still nowhere near sensor-level DR.
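
Both figures come from simple ratios. The sketch below converts a print's reflection density range and a display's luminance range into stops. The density and luminance values are hypothetical, and the ambient-reflection term is an assumption added to show why a panel's specified contrast overstates what a viewer actually sees:

```python
import math

def print_stops(d_min: float, d_max: float) -> float:
    """Print range in stops. Reflection density is log10(1 / reflectance),
    so a density range D corresponds to a 10**D brightness ratio."""
    return (d_max - d_min) * math.log2(10)

def display_stops(white_nits: float, black_nits: float, reflected_nits: float = 0.0) -> float:
    """Effective display range in stops. Ambient light reflected off the screen
    adds to both the brightest and the darkest value the viewer sees."""
    return math.log2((white_nits + reflected_nits) / (black_nits + reflected_nits))

# Hypothetical media values, for illustration only.
print(f"glossy print (D 0.1-2.1):   {print_stops(0.1, 2.1):.1f} stops")
print(f"matte print (D 0.1-1.6):    {print_stops(0.1, 1.6):.1f} stops")
print(f"1000:1 monitor, dark room:  {display_stops(250, 0.25):.1f} stops")
print(f"1000:1 monitor, lit office: {display_stops(250, 0.25, reflected_nits=2.0):.1f} stops")
```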

In practice, this means that every image is compressed. Tone mapping is unavoidable. The photographer must decide what to keep, what to emphasize, and what to sacrifice. The idea that capturing more DR automatically results in a “better” final image ignores the fact that most of that range will never be seen. (This is not to say that more dynamic range doesn’t allow more flexibility in tone mapping. It certainly does.)

In other words, dynamic range is not just a capture problem. It is a translation problem.

And in that translation, judgment matters more than headroom.
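
The simplest possible translation, a uniform squeeze in log space, shows what compression does to a file. This is a hypothetical global curve written for illustration, not the algorithm of any particular raw converter:

```python
import math

def log_compress(luminance: float, scene_white: float,
                 scene_stops: float, output_stops: float) -> float:
    """Hypothetical global tone curve: squeeze the scene's range (in stops below
    white) uniformly into the output's range. Returns relative output
    luminance, where 1.0 is output white."""
    below_white = math.log2(scene_white / luminance)
    below_white = min(max(below_white, 0.0), scene_stops)  # clip above white and below the floor
    return 2 ** -(below_white * output_stops / scene_stops)

# A 14-stop capture squeezed into a 7-stop print: each scene stop becomes half an output stop.
for scene_tone in (0, 2, 4, 8, 14):
    out = log_compress(2 ** -scene_tone, 1.0, 14, 7)
    print(f"{scene_tone:>2} stops below white -> {math.log2(1 / out):.1f} stops below print white")
```

A uniform squeeze like this halves local contrast everywhere, which is why practical tone curves tend to spend the limited output range unevenly, protecting midtone contrast and compressing the extremes. Deciding where to spend it is exactly the judgment described above.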

The Illusion of “Better Files”

There is a subtle but important distinction between a file that is more flexible and a file that is better.

High dynamic range produces files that tolerate aggressive editing. Shadows can be lifted, highlights can be given a smoother roll-off, and exposure can be adjusted with less penalty. This flexibility is valuable, especially in difficult conditions.

But flexibility is not inherently aesthetic. A file that can survive extreme manipulation is not necessarily a file that looks better when handled normally. In fact, sensors optimized for maximum DR often produce flatter tonal responses, requiring more deliberate shaping in post. If you don’t know how to manipulate such a file, then it may be more of a hindrance than anything else.

A photograph is not judged by how much abuse it can withstand. It is judged by its appearance.

This is where the obsession begins to distort priorities. It encourages photographers to value recoverability over intentionality, to shoot defensively rather than decisively, and to treat post-processing as a rescue operation rather than a refinement.

This inversion leads to poor decision-making. Photographers end up evaluating cameras based on hypothetical failure scenarios rather than actual use.

Where Real Gains Might Still Come From

Despite the plateau, dynamic range is not a closed chapter. There are still areas where meaningful improvements could occur, though they are unlikely to produce dramatic leaps.

One of the most promising directions is the continued refinement of multi-gain sensor architectures. Most modern sensors already use dual conversion gain (sometimes referred to as “dual native ISO”) — first seen in the Aptina sensors in the Nikon 1 series of cameras — to optimize performance at higher ISOs.

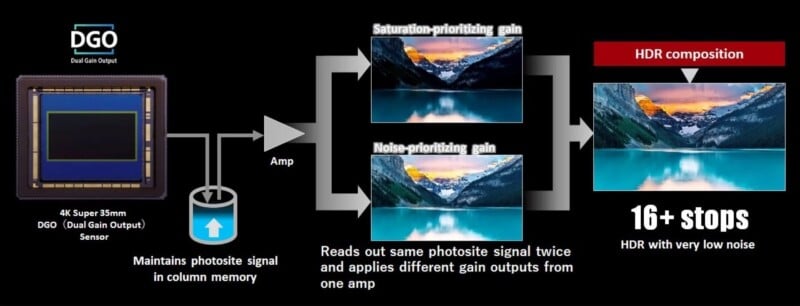

In recent years we’ve started seeing more advanced implementations, in which the same charge is sampled twice, at high and low conversion gain, and the two readouts are combined (known as dual gain output). This technology has been extremely useful in video for quite a while, with Arri’s Alexa ALEV sensors and Canon’s DGO (“Dual Gain Output”) sensors being two notable examples. For stills, however, we didn’t see dual gain output until 2022 with the Panasonic GH6 (when using its DR Boost mode). More recently, the Sony a7 V and Panasonic S1 II made headlines for their innovative use of the technology.
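
The principle behind dual gain output can be sketched with hypothetical numbers: the low-gain readout sets the highlight ceiling, the high-gain readout sets the noise floor, and merging them yields a range neither has alone. The `Readout` class, the electron figures, and the hard-switch merge rule are illustrative assumptions, not any manufacturer's implementation:

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class Readout:
    saturation_e: float  # signal (electrons) at which this readout clips
    read_noise_e: float  # input-referred read noise, electrons RMS

    def dr_stops(self) -> float:
        return math.log2(self.saturation_e / self.read_noise_e)

# Hypothetical numbers for illustration only.
LOW_GAIN = Readout(saturation_e=60_000, read_noise_e=6.0)  # lots of headroom, noisier floor
HIGH_GAIN = Readout(saturation_e=4_000, read_noise_e=1.2)  # clips early, very clean shadows

def merged_dr_stops(low: Readout, high: Readout) -> float:
    """Highlights come from the low-gain readout, shadows from the high-gain one."""
    return math.log2(low.saturation_e / high.read_noise_e)

def merge_pixel(low_e: float, high_e: float, high: Readout = HIGH_GAIN,
                switch_fraction: float = 0.9) -> float:
    """Hard switch between two readouts of the same charge, both already scaled
    to electrons. Real implementations presumably blend in a transition zone."""
    return high_e if high_e < switch_fraction * high.saturation_e else low_e

print(f"low gain alone:  {LOW_GAIN.dr_stops():.1f} stops")
print(f"high gain alone: {HIGH_GAIN.dr_stops():.1f} stops")
print(f"merged:          {merged_dr_stops(LOW_GAIN, HIGH_GAIN):.1f} stops")
```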

Another avenue lies in improving full-well capacity without increasing pixel size. This could involve deeper photodiodes, better charge isolation, or more efficient use of the silicon volume. These are difficult engineering problems, but they target the highlight side of the DR equation, which has seen less dramatic improvement than shadow noise.

Stacked sensor designs and improved on-chip processing may also contribute. By separating photodiodes from processing layers and enabling faster, cleaner readout, these architectures can reduce downstream noise and preserve more of the original signal. Over time, this could translate into modest but meaningful DR gains.

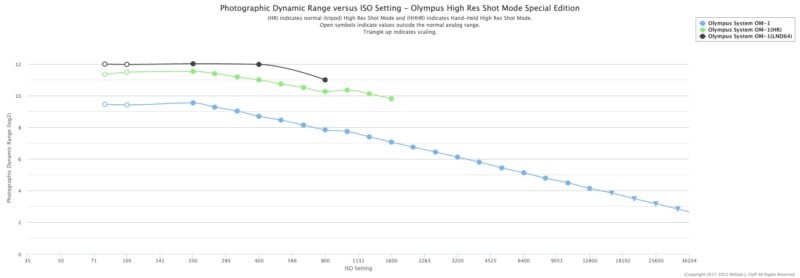

There is also the possibility of more sophisticated analog-domain processing: essentially doing more of the “thinking” before the signal is digitized. If noise can be reduced or managed earlier in the pipeline, the effective dynamic range can improve without requiring fundamental changes to pixel design.

In-camera computational features are perhaps the most promising. Multi-shot HDR fusion of the kind smartphones rely on is becoming more practical in dedicated cameras every year, especially with the widespread adoption of stacked and semi-stacked sensor designs and their faster readout. Features such as Live ND in the Olympus/OM System Micro Four Thirds cameras, which mimics long exposures by stacking photos, or HHHR (Handheld High Resolution) already increase dynamic range dramatically.
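
Frame averaging, the mechanism behind Live ND-style stacking (bracketed HDR fusion works differently), can be sketched under the assumption of perfectly aligned frames of a static scene, each with the same exposure and with noise independent from frame to frame. Averaging n frames lowers the noise floor by the square root of n while the clipping point stays put, which adds half a stop per doubling of frames. The 3 e- read noise is hypothetical, and a small Monte Carlo run checks the square-root rule:

```python
import math
import random
import statistics

def stacking_gain_stops(n_frames: int) -> float:
    """Shadow DR gained by averaging n equal exposures: the noise floor falls by
    sqrt(n) while the clipping point stays where it was."""
    return 0.5 * math.log2(n_frames)

def simulated_noise_floor(read_noise_e: float, n_frames: int,
                          trials: int = 10_000, seed: int = 1) -> float:
    """Monte Carlo check: standard deviation of the average of n pure-noise frames."""
    rng = random.Random(seed)
    averages = [
        statistics.fmean(rng.gauss(0.0, read_noise_e) for _ in range(n_frames))
        for _ in range(trials)
    ]
    return statistics.pstdev(averages)

READ_NOISE_E = 3.0  # hypothetical
for n in (1, 4, 16):
    print(f"{n:>2} frames: +{stacking_gain_stops(n):.1f} stops, "
          f"simulated floor {simulated_noise_floor(READ_NOISE_E, n):.2f} e- "
          f"(expected {READ_NOISE_E / math.sqrt(n):.2f} e-)")
```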

None of these approaches is simple. All involve trade-offs in cost, complexity, power consumption, and heat. And all are subject to the same underlying limits of physics.

Reframing the Priority

The central issue is not that dynamic range is unimportant. It is that it has become disproportionate. Internet forums and comment sections bash “Camera A” for its half-stop-lower DR compared to “Camera B,” while ignoring the dozens of other differences that actually make a visible impact on the end result.

Once a camera provides enough dynamic range to handle the scenes you encounter — which most modern cameras do — the marginal gains cease to be decisive. At that point, other factors take over: how the camera handles, how reliably it focuses, how the files respond to your workflow, how the system fits your way of working, whether the system offers the lenses you need, etc.

More importantly, the photographer’s own decisions become the limiting factor. Light selection, composition, timing, and interpretation matter far more than whether the sensor offers another fraction of a stop in shadow recovery.

The obsession with dynamic range persists because it offers clarity in a medium that resists it. It turns photography into a measurable contest, where differences can be plotted and ranked. But that clarity is misleading. What remains is a gap between what cameras can record and what photographs actually use.

Closing that gap is not a technical problem. It is a creative one.