Image Sensors: The Main Battleground of the Camera Industry


Manufacturing silicon is patently not required in order to make cameras (anyone can put together a pinhole model), but the wider point is more pertinent: to be a competitive global manufacturer, do you need to make the sensors that actually go into camera bodies?

The History of Camera Manufacturing

The camera manufacturing business has its origins in the precision engineering sector that made and assembled mechanical marvels. Anyone who has held, and used, a film Leica will have marveled at the sheer kinaesthetic pleasure of handling it, along with the joy and assurance of loading a film, winding it on, and taking a shot.

Of course, camera bodies are only half of the equation, with lenses making up the other key element. In fact, the importance of lenses cannot be overstated, and it is one of the reasons for the success of Leica as well as the likes of Nikon and Canon. Those that made cameras often bought lens manufacturers (like Bronica), while those that made optics and, subsequently, lenses went on to become camera manufacturers (such as Minolta).

Cameras and lenses are symbiotically conjoined and while there are undoubtedly outstanding lens manufacturers (like Zeiss and Sigma), own-brand lenses are best placed to make the most of the characteristics of the camera.

What manufacturers had no control over was the film put in the camera; that was a technical and aesthetic choice of the photographer and was dependent upon what they were trying to achieve. Positive, negative, black and white, color, fast, or slow… all were choices that determined the final “look” that was desired and relied upon the chemical wizardry of the film manufacturer.


The Shift from Film to Digital

The move to digital has completely changed the relationship between manufacturer and photographer: the “look” is now relegated to processing, whether in-camera or in later post-production. The sensor is now an integrated element whose technical specifications are critical to the overall performance of the camera.

In fact, sensors have been one of the primary focal points for manufacturers, evidenced by the megapixel space race of the early 2000s. While that has largely abated over the last 10 years, there is very much a focus on sensor performance with noise, dynamic range, and readout speed becoming increasingly important.

For example, when the Nikon D800 was launched, its 36-megapixel sensor was groundbreaking; the recently launched Z9 has a new 46-megapixel sensor which, given the intervening 10 years, represents a limited megapixel increase. Yet the two sensors couldn’t be more different in terms of noise, dynamic range, and readout speed, which is where all the gains have been made.

The Z9’s sensor is vastly better: it produces cleaner images with a wider dynamic range, and its data can be written to a memory card at insanely fast speeds. Noise and dynamic range are directly related to producing cleaner and richer looking images, while readout speed is about nailing not only the decisive moment but the definitive moment.

Yet perhaps the single biggest driver of this revolution in sensor design has been video. The relentless push to merge video into stills cameras, led in particular by Panasonic, has highlighted the market’s desire for this functionality. While both Nikon (with the D90) and Canon (with the 5D Mark II) were early to market with video, their capabilities (and particularly Nikon’s) have lagged behind the competition, and it is only recently that they have been truly market-leading.

With the Z9, it’s worth noting that the headline-grabbing specifications of the camera have been principally based around the sensor. A fast readout speed allows the Z9 to shoot raw at 20 fps for over 1000 images, JPEGs at up to 30 fps, and a remarkable 120 fps at 11 megapixels, all with full autofocus. On top of that, the Z9 can also shoot 8K video at 60p, and 8K at 30p for 125 minutes.
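As a rough illustration of why readout speed sits behind these headline numbers, the burst figures can be converted into pixel throughput. This is only a back-of-the-envelope sketch using the megapixel counts and frame rates quoted in this article; the function name is illustrative, and the calculation ignores bit depth, compression, and the JPEG mode (whose resolution isn’t stated here).

```python
def pixel_throughput_mp_per_s(megapixels, fps):
    """Megapixels the sensor must deliver each second at a given burst rate."""
    return megapixels * fps


# Figures quoted in the article for the Z9.
raw_burst = pixel_throughput_mp_per_s(46, 20)    # full-resolution raw burst
fast_burst = pixel_throughput_mp_per_s(11, 120)  # reduced-resolution 120 fps mode

print(f"Raw burst:  {raw_burst} MP/s")
print(f"Fast burst: {fast_burst} MP/s")
```

On these numbers, the reduced-resolution 120 fps mode actually asks the sensor for more pixels per second than the full-resolution raw burst does.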

This isn’t to belittle the other elements of the camera’s design: the Expeed 7 processor that offloads bucket loads of sensor data and dumps it onto the memory cards, or the IBIS and the significantly improved autofocus and subject tracking. Yet it’s the sensor that is garnering the headlines. But perhaps that’s the point: the sensor is the linchpin of the camera system and is designed not only to provide the best possible output for the given budget but to work effectively in the sensor-lens-body system.

So is it necessary to manufacture your own sensors?

A Look at the Image Sensor Market

First up, it’s worth saying that image sensor manufacturing (or fabrication) is a massively expanding, but expensive, business. The 1.5 billion smartphones sold every year are testament to that, but then throw in industrial vision systems in the robotics, automotive, medical, and science areas, and it’s not surprising that global demand is rising.

In 2019, the market was worth $17.2 billion and was estimated to grow to $27 billion by 2023. Because of this growth, in 2020 Samsung began converting an existing DRAM manufacturing line to image sensors. This isn’t an unusual step, as about 80% of the manufacturing process (and so the equipment) is the same, but it was still going to require a write-down of $815 million!
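To put that forecast in perspective, the two market figures imply a compound annual growth rate. The sketch below assumes smooth compounding over the four years from 2019 to 2023, which is a simplification rather than anything the forecast itself states; the function name is illustrative.

```python
def implied_cagr(start_value, end_value, years):
    """Compound annual growth rate implied by a start value, end value, and span."""
    return (end_value / start_value) ** (1 / years) - 1


# Market size in billions of dollars, as quoted in the article.
growth = implied_cagr(17.2, 27.0, 2023 - 2019)
print(f"Implied annual growth: {growth:.1%}")
```

That works out to roughly 12% a year, which helps explain why converting an existing production line looked attractive despite the write-down.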

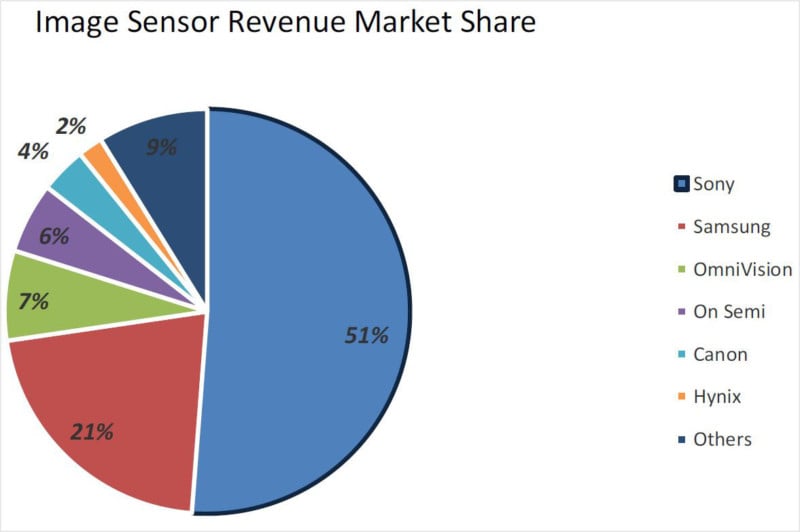

It’s fair to say that entering the fabrication business is not open to small players. In fact, the market is dominated by a handful of companies (2019 figures): Sony (51%), Samsung (21%), OmniVision (7%), On Semi (6%), Canon (4%), and Hynix (2%), with the remaining 9% made up of several others.

When it comes to just the smartphone market the figures are similar (in 2020): Sony (46%), Samsung (29%), and OmniVision (10%).
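How concentrated the market is becomes clearer when the shares are added up. This short sketch uses only the percentages quoted above; the helper name is illustrative, and the smartphone remainder is simply whatever the three named vendors leave over.

```python
# Market shares in percent, as quoted in the article.
overall_2019 = {
    "Sony": 51, "Samsung": 21, "OmniVision": 7,
    "On Semi": 6, "Canon": 4, "Hynix": 2, "Others": 9,
}
smartphone_2020 = {"Sony": 46, "Samsung": 29, "OmniVision": 10}


def top_n_share(shares, n):
    """Combined share of the n largest named vendors (excluding 'Others')."""
    named = [v for k, v in shares.items() if k != "Others"]
    return sum(sorted(named, reverse=True)[:n])


overall_top2 = top_n_share(overall_2019, 2)
smartphone_top2 = top_n_share(smartphone_2020, 2)
smartphone_rest = 100 - sum(smartphone_2020.values())

print(f"Top two overall (2019):    {overall_top2}%")
print(f"Top two smartphone (2020): {smartphone_top2}%")
print(f"Smartphone share left for everyone else: {smartphone_rest}%")
```

In both cases, Sony and Samsung together account for around three-quarters of the market.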


But what about camera manufacturers? The simplest solution is to buy an existing product off-the-shelf, which is the option many manufacturers take for their models. You know what you are getting and design your camera to fit the specifications, making it an economical approach. The downside is two-fold: you can’t optimize the sensor to your lens and camera body design specifications, and you have limited ability to incorporate the latest sensor innovations.

This approach is somewhat similar to using Intel or AMD chips inside PCs; you pick the spec you want and build a system around it. Apple identified this as an opportunity to differentiate themselves in the market and developed the M1 chip, which is manufactured by Taiwan Semiconductor Manufacturing Company (TSMC).

Alternatives to Designing and Manufacturing Sensors

Designing and manufacturing your own sensor — particularly for top-end models — is clearly beneficial, although this has to be weighed against the cost of sensor production. For this reason, manufacturers have two alternative options.

The first is to provide general specifications and constraints, with the sensor then designed and produced by a third party. This is actually the way Sony Semiconductor Solutions (a subsidiary of Sony) works. The second is to supply your own design to a foundry that manufactures it (such as Tower Semiconductor). Nikon has a long history of using Sony sensors, but it is largely agnostic as to manufacturer in order to minimize the risk of relying on one too much. In the past, it manufactured some of its own sensors, including the early LBCAST models, although it looks like it no longer has a fab facility but still retains an extensive sensor design group.

Similarly, Sigma outsources Foveon sensor production to Dongbu HiTek, Fuji uses a mix of Fuji and Toshiba sensors, Panasonic has sensors from a partnership with TowerJazz, Olympus uses a range of manufacturers, and Pentax sticks principally with Sony.

That leaves Canon, which makes a point of noting that its EOS cameras use Canon sensors (although that suggests that other models may use off-the-shelf sensors fabbed elsewhere). With around 4% of the sensor market, it is a relatively small, if important, manufacturer. And like Nikon, it also produces fabrication systems that are used by foundries.

The release of the R3 shows that Canon sensors remain at the cutting edge and demonstrates a strong three-way competitive market with Nikon and Sony.


Should Camera Manufacturers Make Their Sensors?

So do camera makers need to manufacture silicon? The overwhelming evidence is that they don’t, with only really Sony and Canon in this camp. However, what is critical is designing your own image sensors, specifically for top-end models. This offers market differentiation through the ability to directly innovate on the sensor design while also integrating it into the camera system as a whole, something we see Nikon doing quite visibly.

Perhaps “integration” is the keyword here: with the advent of the smartphone, users expect an integrated system that works holistically to produce the best image possible. Camera manufacturers have to work beyond the camera body and lens to provide a data processing chain from photo capture through to a processed image that is delivered in the way the end-user wants.

It may be hard to understand for photographers, but the camera may well have become incidental to the photographic process. It’s what you then do to the raw image that is critical and camera manufacturers are coming under increasing pressure from smartphones, which are far better able to manage these expectations.

Has the image sensor become the defining component of the camera? Quite possibly so.

Image credits: Photos licensed from Depositphotos