The History of Autofocus: From Rangefinders to AI Subject Recognition

Autofocus is one of the great invisible revolutions in photography. When it works well, it disappears. A photographer raises the camera, half-presses the shutter, sees a box snap to an eye, a bird, a car, or a face, and the lens silently moves to the right position.

In the best modern systems, the camera does not simply focus; it can recognize, predict, track, and compensate. It can follow a soccer player running toward the lens, a bird crossing an uneven background, a model turning her face away and back again, or a child moving unpredictably across a room. The result feels effortless, but that ease is the product of nearly half a century of development.

Autofocus is not one technology. It is a chain of technologies working together. A camera must determine whether the subject is in focus, decide which direction focus must move, calculate how far to move the lens, activate a motor, confirm the result, and, in continuous autofocus, repeat that process many times per second while the subject and photographer may both be moving. The history of autofocus is therefore not merely the history of sharper snapshots. It is the history of sensors, motors, processors, lens mounts, mechanical couplings, optical theory, and eventually machine learning.

The road from the earliest autofocus compact cameras to today’s AI-driven mirrorless bodies was not straight. Early systems used active infrared or ultrasonic ranging. Film SLRs used dedicated phase-detection modules. Compact digital cameras often relied on contrast detection from the imaging sensor. DSLRs refined dedicated AF modules to a high art, while mirrorless cameras eventually absorbed autofocus into the image sensor itself. At the same time, lens motors evolved from noisy screw-drive systems and geared micromotors into ultrasonic, stepping, linear, and voice-coil motors capable of astonishing speed and precision.

Before Autofocus: Manual Focus and Rangefinder Thinking

Before cameras could focus themselves, photographers relied on several manual methods. View cameras used ground glass. Rangefinder cameras used coupled rangefinder mechanisms. SLRs used focusing screens, split-image prisms, and microprisms. Each method had strengths, but all depended on human judgment.

The rangefinder is especially important to the history of autofocus because many early autofocus ideas were, in effect, automated rangefinders. A traditional coupled rangefinder compares two views of the subject from slightly different positions. When the two images align, the lens is focused at the corresponding distance. The photographer performs the comparison visually. An autofocus system can do something similar electronically: compare two images, calculate the displacement between them, and move the lens until the displacement indicates focus. Indeed, the Contax G1 and G2 did precisely this and managed to wrangle the title of the world’s only autofocus rangefinder system.

This basic idea — using geometry to determine focus distance — runs through much of autofocus history. Phase-detection autofocus in SLRs is not the same thing as a coupled rangefinder, but the underlying logic has a family resemblance. The camera compares light coming from different parts of the lens and uses the difference to infer whether focus is in front of or behind the subject.

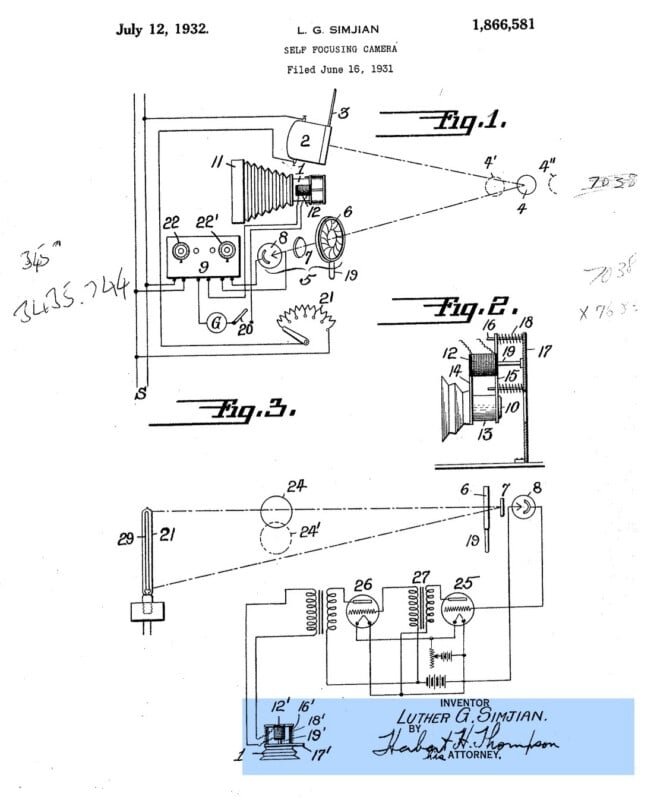

Early Experiments and the Search for Automation

The desire to automate focusing predates the consumer cameras that made autofocus famous. Before the postwar race toward practical autofocus systems, one of the earliest known autofocus ideas appeared in a 1931 patent filed by Armenian-American inventor Luther George Simjian for a “self-focusing camera.” Simjian was already working in the strange borderland between photography, automation, optics, and self-portraiture. In 1929, he had patented a “pose-reflecting” photographic apparatus, which became the PhotoReflex: a self-photographing camera that allowed the subject to see and compose their own portrait before exposure. That invention led him toward the next logical question: if a camera could help the subject pose without a photographer behind it, could the camera also focus itself? His self-focusing camera patent, filed in June 1931 and granted in July 1932, did not create the modern autofocus industry by itself, but it is an important conceptual starting point because it framed focus as something a camera mechanism could determine rather than something only a human operator could judge.

By the 1960s and 1970s, several manufacturers were experimenting with ways to make cameras focus automatically. Leica developed and demonstrated autofocus concepts, including systems that anticipated later through-the-lens focusing methods. These prototypes showed that autofocus was technically possible, but they were not yet commercially practical.

The challenge was not simply inventing a focus sensor. A successful autofocus system had to be compact, affordable, reliable, fast, and compatible with real photographic use. It had to work in imperfect light. It had to avoid excessive battery drain. It had to move the lens accurately without making the camera huge or fragile. It also had to persuade photographers that giving up manual focus control was not a gimmick.

This last point mattered. Serious photographers did not immediately embrace autofocus. Many early systems were slow, noisy, limited to the center of the frame, and easily confused. Professional photographers had good reasons to trust their eyes and hands more than crude electronics. Autofocus had to earn its place.

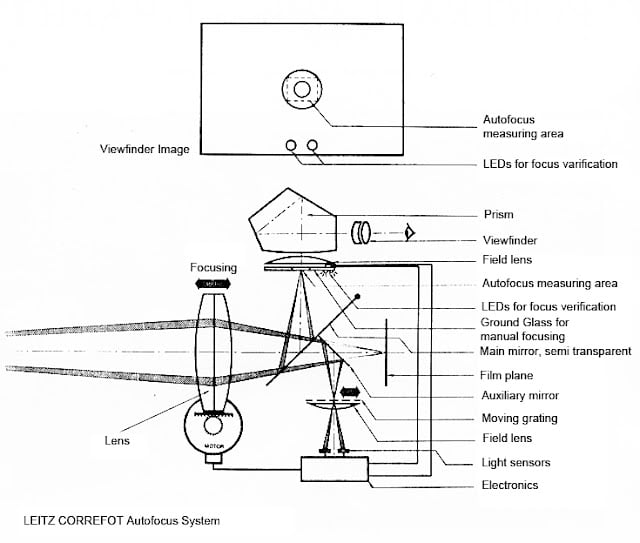

In 1976, Leica produced one of the most famous pre-commercial autofocus prototypes: the Correfot system, which was shown at Photokina that year. Leica’s prototype used a 50mm lens fitted with a servo motor and a contrast-detection arrangement assisted by two LEDs above the viewfinder; the system looked for maximum subject contrast and then drove the focusing ring automatically. It was large, complex, and not ready to become a consumer product, but it showed how close the industry was getting to the modern idea of autofocus: not merely estimating distance, but using image information to let the camera itself decide where sharpness should be.

The First Commercial Successes: Compact Autofocus Cameras

The first major autofocus breakthrough came in compact cameras rather than professional SLRs. The Konica C35 AF, introduced in 1977, is widely recognized as the first commercially successful production autofocus camera. It used Honeywell’s Visitronic system, which worked by comparing images in a rangefinder-like arrangement and setting the lens accordingly. This was not through-the-lens autofocus in the later SLR sense, but it solved the problem that mattered most for casual photographers: point the camera, press the button, and get a reasonably sharp picture.

The importance of the Konica C35 AF is easy to underestimate. It was not a high-performance professional tool, but it proved that autofocus could be desirable in the mass market. For the average user, manual focusing was not a satisfying craft skill; it was a way to miss pictures. Autofocus turned the compact camera into something closer to a true point-and-shoot device.

Polaroid made another major early contribution with sonar autofocus. The Polaroid SX-70 Sonar OneStep, introduced in the late 1970s, used ultrasonic sound pulses to measure distance. The camera emitted a sound pulse, measured the time it took to return, and set focus based on the distance. This was an active autofocus system: instead of analyzing the image, the camera measured the subject’s distance directly.
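The time-of-flight idea reduces to a single line of arithmetic. The following is a minimal sketch, not Polaroid's actual implementation: the function name, the assumed speed of sound (about 343 m/s in room-temperature air), and the sample echo time are all illustrative assumptions.

```python
SPEED_OF_SOUND_M_PER_S = 343.0  # assumed: dry air at roughly 20 °C


def sonar_distance_m(round_trip_s, speed_m_per_s=SPEED_OF_SOUND_M_PER_S):
    """Distance to the reflecting surface, given the round-trip echo time."""
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    # The pulse travels out and back, so the subject is half the path away.
    return speed_m_per_s * round_trip_s / 2.0


if __name__ == "__main__":
    # Hypothetical echo that returns after 10 milliseconds.
    print(f"{sonar_distance_m(0.010):.3f} m")
```

Note that the calculation says nothing about what reflected the pulse, which is why a pane of glass or a foreground object could fool such a system.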

Sonar autofocus was clever, fast, and well-suited to instant photography, but it also showed the limits of active focusing. The camera could be fooled by glass, foreground obstacles, or subjects that did not reflect the signal as expected. It measured distance to whatever reflected the pulse, not necessarily the subject the photographer intended. Still, it was a crucial step. It showed that autofocus did not have to imitate the human eye. It could use entirely different sensing methods.

Active Autofocus: Infrared, Sonar, and Triangulation

Early compact autofocus systems often used active methods. These systems sent something out into the scene—usually infrared light or ultrasonic sound—and measured a return signal. Some systems used triangulation, comparing reflected infrared light from different angles. Others measured time-of-flight, as in Polaroid’s sonar system.

The advantage of active autofocus was simplicity. It did not require the camera to analyze image sharpness. It could work even when the lens was not transmitting much light to a sensor. It was also relatively fast for snapshot use. The camera could measure subject distance and move the lens to a preset position.

The disadvantages were equally clear. Active AF often focused only near the center of the frame. It could be confused by reflective or transparent surfaces. It could focus on something between the camera and the intended subject. It was generally less suitable for long lenses, interchangeable lenses, and complex professional photography. Active AF was good at estimating distance, but it was not truly evaluating the image that would be recorded.

As cameras became more sophisticated, passive autofocus became dominant. Passive systems use light from the scene itself. They do not emit a signal. Instead, they analyze the image or the light coming through the lens. Phase detection and contrast detection are both passive autofocus systems, and together they form the technical backbone of modern AF.

The First Autofocus SLRs

Bringing autofocus to the 35mm SLR was far more difficult than adding it to a compact camera. An SLR has interchangeable lenses, a reflex mirror, and a through-the-lens viewing path. Any autofocus system had to work with different focal lengths, different maximum apertures, different focusing mechanisms, and different optical designs.

The Pentax ME-F, introduced in 1981, is generally considered the first autofocus 35mm SLR. It used focus detection in the camera body but required a special motorized zoom lens. Nikon followed with the F3AF in 1983, another transitional camera that adapted a professional manual-focus SLR architecture for autofocus use with special AF lenses.

These cameras were historically important, but they were not yet the moment when autofocus took over. They were awkward hybrids. They demonstrated that autofocus could be applied to an SLR, but they also showed that merely attaching autofocus to an existing manual-focus system was not enough. A truly successful autofocus SLR needed to be designed as an integrated AF camera from the beginning.

Minolta and the Autofocus SLR Revolution

The real breakthrough came in 1985 with the Minolta Maxxum 7000, partly built on the technology of the Leica Correfot, which Leitz had sold to Minolta after the unsuccessful debut at Photokina.

This was the camera that made autofocus feel modern. It integrated autofocus, autoexposure, motorized film advance, and a new lens system into a coherent package. Unlike earlier experimental or transitional AF SLRs, the Maxxum 7000 was not a manual-focus camera with autofocus attached. It was an autofocus camera by design.

Minolta’s system used a motor in the camera body to drive the focusing mechanism in compatible lenses. This screw-drive approach kept lenses relatively simple and allowed the camera body to provide the motor power. The Maxxum system was a commercial and technological shock. It established autofocus as the future of the SLR market almost overnight.

The Maxxum 7000 also changed the competitive balance of the camera industry. Minolta, which had been respected but not dominant in the professional SLR market, suddenly looked like the autofocus leader. Nikon, Canon, Pentax, and others had to respond quickly. Autofocus was no longer a novelty; it had become a system-level demand.

Nikon’s Autofocus Path: Compatibility and Professional Refinement

Nikon’s approach to autofocus was shaped by its commitment to the F mount. The company had a large base of professional users and a deep lens system. Abandoning compatibility would have been risky. Instead, Nikon introduced autofocus while preserving as much continuity as possible.

Early Nikon AF SLRs used body-driven screw-drive autofocus. A motor in the camera body turned a mechanical coupling that moved the focusing elements in the lens. This allowed many autofocus lenses to remain mechanically tied to the camera body rather than carrying their own motors. It was a practical and conservative solution, especially for a company with a professional system to protect.

Nikon’s autofocus matured through cameras such as the F-501/N2020, F4, and F5. The F4 was particularly important because it bridged the manual and autofocus eras. It supported autofocus while retaining complete compatibility with all manual Nikon lenses (even non-AI). The F5 later represented the high-performance professional film SLR at its most advanced: fast motor drive, sophisticated metering, rugged construction, and autofocus suitable for sports, action, and photojournalism.

Nikon eventually moved toward in-lens motors with AF-I and then AF-S lenses using Silent Wave Motor technology. These lenses improved speed and quietness, especially for telephoto and professional applications. But Nikon’s long use of screw-drive autofocus remains one of the defining examples of how legacy lens mounts shaped autofocus history.

Canon EOS and the Fully Electronic Future

Canon made the boldest move of the autofocus era. After experimenting with autofocus in the FD system (e.g., the T80), Canon decided that its older mount was not the best foundation for the future. In 1987, Canon introduced the EOS system and the EF mount. This was a clean break. EF lenses were not mechanically compatible with FD cameras, and FD lenses could not simply carry forward into the EOS future.

The decision angered some existing Canon users, but it was technically brilliant. The EF mount was fully electronic. There was no mechanical aperture linkage and no mechanical autofocus screw-drive connection. Every EF lens contained its own autofocus motor. Starting with the EOS 650 in 1987, communication between body and lens was entirely electronic.

This gave Canon an enormous long-term advantage. Lens motors could be optimized for each lens. Electronic aperture control simplified communication. The mount had room to grow. Canon’s professional EF lenses, especially those with ring-type Ultrasonic Motor technology, became famous for fast, quiet autofocus. The EOS-1 series became a dominant professional platform in sports, wildlife, and photojournalism.

Canon’s break with the past foreshadowed the future of all major camera systems. Modern mirrorless mounts from Canon, Nikon, Sony, Fujifilm, Panasonic, and others are all deeply electronic. Mechanical couplings are gone. The lens and body communicate continuously. Focus is no longer merely a motor command; it is a data exchange.

How Phase-Detection Autofocus Works

Phase-detection autofocus became the defining technology of film SLRs and DSLRs. In a traditional SLR or DSLR, the main mirror reflects most light upward to the viewfinder, but some light passes through a semi-transparent portion of the mirror to a secondary mirror. That secondary mirror directs light down to a dedicated autofocus module at the bottom of the camera.

The AF module receives paired images formed from light coming through different parts of the lens. If the subject is in focus, the paired images align. If they do not align, the system can determine whether focus is in front of or behind the subject. This is the great advantage of phase detection: it knows direction. It can tell the lens not merely that focus is wrong, but which way to go.

This made phase detection much faster than traditional contrast detection for action photography. A phase-detection system can command the lens to move directly toward focus rather than hunting back and forth. It can also predict subject motion by measuring changes over time. That is why phase detection became so important for sports, wildlife, and professional news photography.
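The directional logic can be illustrated with two one-dimensional brightness profiles. This is a simplified sketch, not any manufacturer's algorithm: it assumes the two profiles are equal-length lists, searches integer shifts only, and uses an arbitrary sign convention for which way the lens should move. The names `best_shift` and `focus_command` are hypothetical.

```python
def best_shift(first, second, max_shift):
    """Integer shift s that best aligns second[i + s] with first[i].

    Scores each candidate shift by the mean absolute difference over
    the samples where the two profiles overlap.
    """
    n = len(first)
    if len(second) != n:
        raise ValueError("both profiles must have the same length")
    best, best_cost = 0, float("inf")
    for s in range(-max_shift, max_shift + 1):
        diffs = [abs(first[i] - second[i + s]) for i in range(n) if 0 <= i + s < n]
        if not diffs:
            continue
        cost = sum(diffs) / len(diffs)
        if cost < best_cost:
            best, best_cost = s, cost
    return best


def focus_command(shift):
    """Turn the signed shift into a drive direction (sign convention assumed)."""
    if shift == 0:
        return "in focus"
    direction = "farther" if shift > 0 else "nearer"
    return f"move focus {direction} by {abs(shift)} units"


if __name__ == "__main__":
    profile_a = [0, 0, 1, 4, 9, 4, 1, 0, 0, 0, 0, 0]
    # Same pattern displaced three samples to the right (made-up data).
    profile_b = [0, 0, 0, 0, 0, 1, 4, 9, 4, 1, 0, 0]
    print(focus_command(best_shift(profile_a, profile_b, max_shift=5)))
```

A single comparison yields both the sign and the size of the error, so the lens can be driven straight toward focus instead of searching for it.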

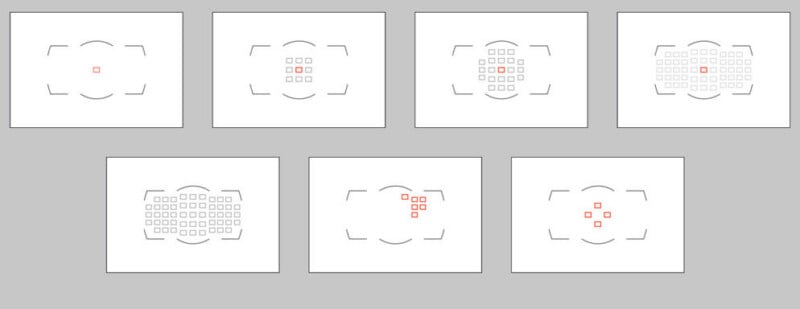

Over time, phase-detection systems gained more AF points, better low-light sensitivity, cross-type sensors, and more advanced tracking logic. A cross-type AF point can detect contrast in both horizontal and vertical orientations, making it more reliable than a single-axis point. Some AF points are optimized for lenses of certain maximum apertures, such as f/2.8, where the wider cone of light allows more precise focus measurement.

The DSLR era represented the peak of dedicated autofocus modules. Cameras like the Nikon D3, D4, D5, Canon EOS-1D series, and later high-end enthusiast bodies had extremely sophisticated AF systems. In continuous autofocus (AF-C on Nikon, Sony, and Pentax; AI Servo on Canon), they could track subjects at high frame rates, analyze motion, and coordinate autofocus with metering sensors.

These systems were highly specialized. Professional DSLRs often had central clusters of AF points, with the most sensitive points near the middle of the frame. This reflected the physical limitations of the mirror and AF module. The dedicated AF sensor could not easily cover the entire image area. Still, within that central region, mature DSLR autofocus was astonishingly capable.

The weakness was calibration. The dedicated AF module was not the imaging sensor. It lived in a separate optical path. If the mirror alignment, AF module position, lens calibration, or body tolerances were slightly off, the camera could front-focus or back-focus. This was especially obvious with fast lenses at wide apertures. DSLR users became familiar with AF micro-adjustment, a feature that allowed photographers to compensate for systematic focus errors.

When everything was calibrated properly, DSLR phase detection was superb. But the system’s reliance on a separate focus path was always a compromise. Mirrorless cameras would eventually solve this by focusing directly on the imaging sensor.

Contrast-Detection Autofocus and the Digital Compact Era

While SLRs and DSLRs relied on phase detection, digital compact cameras and early mirrorless cameras often used contrast-detection autofocus. Contrast detection works by analyzing the image on the sensor and looking for maximum contrast. A sharply focused edge has higher local contrast than a blurred one. The camera moves the lens until contrast peaks.

The great advantage of contrast detection is accuracy. Because it uses the imaging sensor itself, it evaluates the actual recorded image. There is no separate AF module to calibrate. If contrast is maximized on the sensor, the subject is focused on the sensor.

The weakness is that contrast detection does not inherently know direction. If the image is blurry, the camera may not know whether the lens should move closer or farther. It has to try one direction, check whether contrast improves, and reverse if necessary. This produces the familiar hunting behavior of many compact cameras and early mirrorless bodies.
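The hunting behavior falls out naturally from a simple hill-climbing search. The sketch below is an illustration under stated assumptions, not a real camera's firmware: `contrast_at` is a made-up toy model that peaks at a hypothetical lens position of 37, and the step sizes are arbitrary.

```python
def contrast_at(position, true_focus=37.0):
    """Toy contrast metric that peaks when the lens sits at true_focus."""
    return 1.0 / (1.0 + (position - true_focus) ** 2)


def hill_climb_focus(measure, start, step=8.0, min_step=0.25, max_iters=200):
    """Search for peak contrast; returns (lens position, number of reversals)."""
    position = start
    best = measure(position)
    direction = 1  # a guess: contrast alone cannot say which way is correct
    reversals = 0
    for _ in range(max_iters):
        if step < min_step:
            break
        candidate = position + direction * step
        value = measure(candidate)
        if value > best:
            # Contrast improved, so keep going the same way.
            position, best = candidate, value
        else:
            # Contrast did not improve: turn around and take smaller steps.
            direction = -direction
            step /= 2.0
            reversals += 1
    return position, reversals


if __name__ == "__main__":
    position, reversals = hill_climb_focus(contrast_at, start=60.0)
    print(f"settled at {position} after {reversals} reversals")
```

Every reversal in the loop corresponds to a visible back-and-forth movement of the lens, and the search only learns it has passed the peak after overshooting it.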

Contrast detection can be very accurate for still subjects, landscapes, product photography, and tripod work. But for moving subjects, especially subjects coming toward or away from the camera, traditional contrast detection struggles. It is reactive rather than predictive.

Panasonic DFD and Smarter Contrast AF

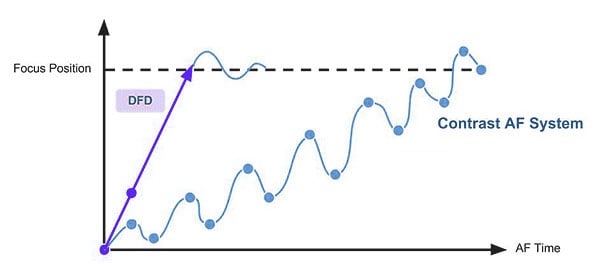

Panasonic developed one of the most interesting alternatives to conventional contrast detection: Depth From Defocus, or DFD. Rather than relying purely on trial-and-error contrast hunting, DFD compares defocused images and uses knowledge of the lens’s blur characteristics to estimate distance and focus direction.

In simple terms, Panasonic tried to make contrast autofocus smarter by giving the camera a model of how each compatible lens renders out-of-focus blur. By comparing two images with different defocus patterns, the camera could estimate how far and in what direction to move the lens. This made single-shot autofocus very fast in many Panasonic cameras.

DFD was a clever technical solution, but it also revealed the industry’s direction. The future belonged to systems that could determine focus direction from the imaging sensor itself. Most manufacturers eventually moved toward on-sensor phase detection. Panasonic, after holding out longer than others in some lines, also eventually adopted phase-detection autofocus in newer mirrorless models.

On-Sensor Phase Detection and the Mirrorless Breakthrough

The great breakthrough in mirrorless autofocus was on-sensor phase detection (OSPDAF). Instead of using a separate AF module beneath a reflex mirror, the camera places phase-detection pixels directly on the imaging sensor. These pixels allow the camera to determine the direction of focus while evaluating focus at the actual image plane. The first camera to do this was, I believe, the Fujifilm FinePix F300EXR in 2010. The F300EXR used Fuji’s fascinatingly innovative SuperCCD sensor design, but also included some very tiny additional photosites dedicated to phase-detection autofocus.

The following year, Nikon debuted its Nikon 1 series of 1-inch-type mirrorless cameras, which were unbelievably performant for their time. OSPDAF in the Nikon 1 V1 made it the first interchangeable lens camera (ILC) with such a feature. In 2012, Sony released the NEX-5R, and in 2013, Sony cross-licensed its patent portfolio with Aptina, which had developed the Nikon 1 sensors.

On-sensor phase detection autofocus solved two huge problems at once. First, it gave mirrorless cameras the directional speed of phase detection. Second, it eliminated the DSLR calibration problem because autofocus was measured on the same sensor that records the image. No mirror alignment. No separate AF module. No front-focus or back-focus caused by a mismatched optical path.

Early on-sensor phase-detection systems were not perfect. They could have limited coverage, striping artifacts in extreme cases, reduced performance in low light, or less reliable tracking than professional DSLRs. But they improved rapidly. As sensor readout became faster and processors more powerful, mirrorless AF went from adequate to excellent.

Sony arguably played the most significant role in popularizing high-performance mirrorless autofocus, especially with its Alpha series. Cameras like the Sony a6000 (widely considered the best-selling mirrorless camera of all time, still in production 12 years after its release), Sony a9, Sony a7 III, and, later, the Sony a1 helped demonstrate that mirrorless cameras could not only match DSLRs but also surpass them in some ways.

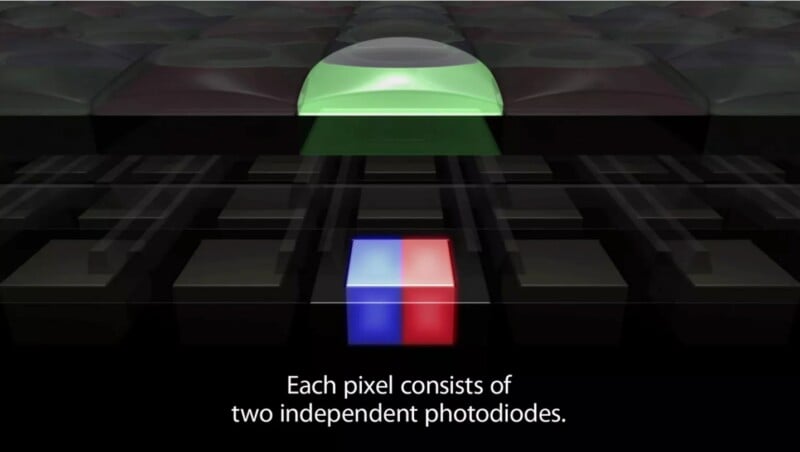

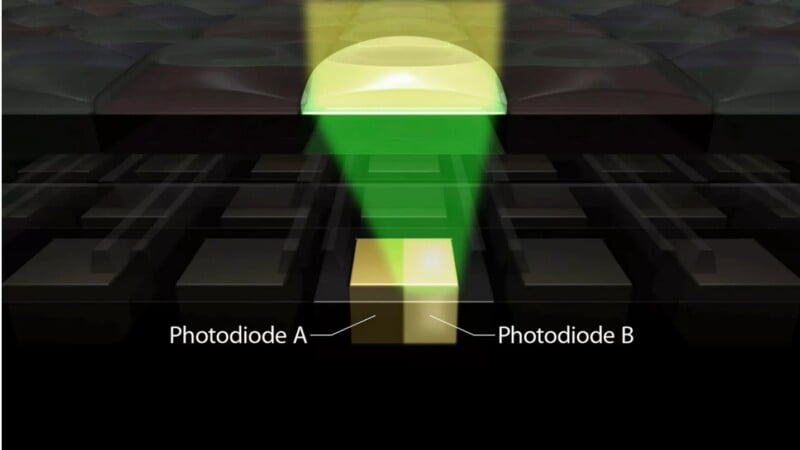

Canon’s Dual Pixel CMOS AF, first introduced in July 2013 with the EOS 70D, was another landmark, though one whose technology never really spilled beyond the walls of Canon. It used paired photodiodes across the sensor, allowing smooth, confident phase-detection autofocus for stills and video.

Dual Pixel AF became especially important for video shooters because it produced natural focus transitions without the constant hunting associated with contrast detection. Dual Pixel AF alone helped make cinema cameras like the Canon C200 and the C300 Mark II and III, and hybrid stills/video models like the 5D Mark IV, 90D, and EOS-1D X Mark III, some of the most popular cameras on documentary and reality-TV shoots, thanks to their (at the time) very advanced ability to smoothly follow focus on subjects.

Face Detection, Eye Detection, and Subject Recognition

Once autofocus moved onto the imaging sensor, cameras could do more than measure focus. They could analyze the image itself. Face detection was an early step. Instead of asking the photographer to place an AF point over a face, the camera could identify faces in the frame and prioritize them.

Eye detection was even more important. With fast lenses and high-resolution sensors, focusing on the face is not always enough. At f/1.2 or f/1.4, the difference between eyelashes, iris, and ear can be visible. Eye AF changed portrait photography by allowing the camera to identify and track a subject’s eye directly. This made shallow-depth-of-field portraiture more reliable, especially with mirrorless cameras where the AF point could cover much more of the frame.
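A quick depth-of-field estimate shows why the eye, rather than the face, has to be the target. This is a rough sketch using the standard thin-lens approximation; the 0.03 mm circle of confusion is a conventional full-frame assumption, and the 85mm lens and 1.5 m subject distance are hypothetical example values.

```python
def depth_of_field_mm(focal_mm, f_number, subject_mm, coc_mm=0.03):
    """Return (near limit, far limit, total depth) in millimetres.

    Thin-lens approximation; coc_mm is the assumed circle of confusion.
    """
    if subject_mm <= focal_mm:
        raise ValueError("subject must be farther away than the focal length")
    blur_term = f_number * coc_mm * (subject_mm - focal_mm)
    f_squared = focal_mm ** 2
    near = subject_mm * f_squared / (f_squared + blur_term)
    if blur_term >= f_squared:
        # At or beyond the hyperfocal distance the far limit is infinity.
        return near, float("inf"), float("inf")
    far = subject_mm * f_squared / (f_squared - blur_term)
    return near, far, far - near


if __name__ == "__main__":
    for aperture in (1.4, 8.0):
        near, far, total = depth_of_field_mm(85.0, aperture, 1500.0)
        print(f"f/{aperture}: about {total:.0f} mm of acceptable sharpness")
```

Under these assumptions, the zone of acceptable sharpness at f/1.4 is only about 25 mm deep, less than the distance from the tip of the nose to the eye on many faces.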

Modern cameras go further. They can recognize humans, animals, birds, insects, cars, motorcycles, trains, airplanes, and sometimes specific body parts such as heads or eyes. This is often described as AI autofocus, though I’d prefer the term “machine learning,” given how often “AI” is demonized in the wake of generative tools like ChatGPT or Gemini. In practical terms, the camera has been trained to identify subject patterns and prioritize focus accordingly.

This is a major philosophical shift. Traditional autofocus asked: is the selected area sharp? Modern autofocus asks: what is the subject, where is the important part of it, how is it moving, and where will it be when the shutter opens?

Predictive Autofocus and Continuous Tracking

Continuous autofocus is one of the hardest tasks in camera design. A subject moving toward the camera changes focus distance constantly. If the camera only focuses on where the subject was a fraction of a second ago, the photo may be soft. The system must predict where the subject will be at the moment of exposure.

Film SLRs and DSLRs developed predictive AF algorithms for sports and action. The camera measured focus distance repeatedly, calculated subject speed and direction, and drove the lens to a predicted position. This became essential for high-speed motor drives.
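The prediction step can be sketched as a straight-line fit through recent distance measurements, extrapolated to the moment of exposure. This is a deliberately simple illustration that assumes constant subject speed; real cameras use more elaborate and proprietary models. The function name, sample values, and 50 ms shutter lag are all hypothetical.

```python
def predict_distance(samples, lead_time_s):
    """Extrapolate subject distance with a least-squares straight line.

    samples: list of (time_s, distance_m) pairs, oldest first.
    lead_time_s: delay between the last measurement and the exposure.
    Returns (predicted distance in metres, estimated velocity in m/s).
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two measurements")
    mean_t = sum(t for t, _ in samples) / n
    mean_d = sum(d for _, d in samples) / n
    spread = sum((t - mean_t) ** 2 for t, _ in samples)
    if spread == 0:
        raise ValueError("measurements must be taken at different times")
    # Slope of the fitted line: negative when the subject approaches.
    velocity = sum((t - mean_t) * (d - mean_d) for t, d in samples) / spread
    exposure_time = samples[-1][0] + lead_time_s
    return mean_d + velocity * (exposure_time - mean_t), velocity


if __name__ == "__main__":
    # Made-up readings of a runner approaching at a steady pace.
    readings = [(0.00, 20.0), (0.05, 19.6), (0.10, 19.2), (0.15, 18.8)]
    predicted, velocity = predict_distance(readings, lead_time_s=0.05)
    print(f"velocity {velocity:.1f} m/s, focus for {predicted:.2f} m")
```

Focusing at the last measured distance would leave the lens set for where the subject was; driving it to the extrapolated distance accounts for the movement that happens during the shutter lag.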

Mirrorless cameras added new possibilities because the imaging sensor could provide more information more often. A modern camera can combine phase-detection data, subject-recognition data, gyro information, lens-position feedback, and image analysis. It can track a subject across much of the frame rather than only within a central AF-point cluster.

The practical result is that modern autofocus is less dependent on the photographer’s ability to keep a single AF point perfectly placed. The photographer still matters, but the burden has shifted. Instead of manually maintaining focus, the photographer chooses AF modes, subject types, tracking sensitivity, and composition while the camera handles much of the mechanical execution.

Autofocus Lens Technology

Screw-Drive Systems

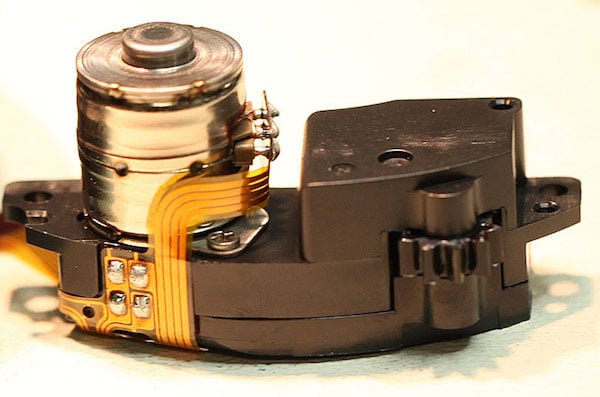

The history of autofocus motors is as important as the history of AF sensors. Early SLR autofocus systems often used screw-drive mechanisms. In a screw-drive system, the motor is in the camera body. A mechanical shaft or coupling turns a screw mechanism in the lens, moving the focusing group.

Screw-drive autofocus had clear advantages. It kept lenses cheaper and simpler. It allowed manufacturers to build autofocus into bodies while maintaining a degree of compatibility with existing mount architecture. It could also be quite fast with the right body and lens combination.

But screw-drive AF was noisy and mechanically limited. The body motor had to drive many different lenses with different focusing masses. Fine control was more difficult. The system was not ideal for video, where smooth and silent focusing became important. Screw-drive autofocus belongs to the mechanical-electronic transition era: effective, sometimes impressively fast, but not elegant by modern standards.

Micromotors and Geared Lens Motors

Another early solution was the in-lens micromotor. These small DC motors used gears to move the focusing elements. They were common in consumer autofocus lenses because they were inexpensive and easy to package.

Micromotor lenses were often slower and noisier than professional ultrasonic lenses. They could also feel less refined during manual focus. But they played an important role in making autofocus affordable. Not every lens needed a sophisticated ring-type ultrasonic motor. For slow kit zooms and inexpensive primes, geared micromotors were good enough.

Even today, some low-cost lenses use relatively simple motor systems. The difference is that expectations have changed. Modern users increasingly expect quiet focus, video compatibility, and precise continuous AF. That has pushed even consumer lenses toward stepper or linear motors.

Ultrasonic Motors

Ultrasonic motors — a type of piezoelectric motor — were a major leap in autofocus actuation. Canon’s USM, Nikon’s SWM, Sigma’s HSM, Tamron’s USD, and similar systems use high-frequency vibration to produce motion. Ring-type ultrasonic motors became especially important in professional lenses because they could be fast, quiet, and powerful.

A good ring-type ultrasonic motor can move large focusing groups quickly. It can also allow full-time manual override, meaning the photographer can adjust focus manually without switching the lens out of AF mode. This became a hallmark of professional autofocus lenses.

Ultrasonic motors were especially important for telephoto lenses. Sports and wildlife photographers needed fast focusing with large glass elements. Body-driven screw systems could work, but in-lens ultrasonic motors allowed the lens itself to be optimized for the task.

For many photographers, the rise of ultrasonic motors marked the point when autofocus stopped feeling like a consumer convenience and became a professional tool.

Stepping Motors

Stepping, or stepper, motors became increasingly important with live view and then mirrorless cameras. A stepping motor moves in precise increments, making it well-suited to smooth, controlled focus transitions. Canon’s STM lenses and Nikon’s AF-P lenses are well-known examples.

Stepping motors are not always the fastest solution for high-end sports photography, but they are often quiet, compact, and smooth. That makes them valuable for video. In video, focus behavior is judged differently than in still photography. A stills lens can snap abruptly into focus and be considered excellent. A video lens or hybrid lens must avoid visible hunting, pulsing, or sudden jumps.

The rise of hybrid stills/video cameras changed autofocus motor priorities. Photographers wanted speed. Videographers wanted smoothness and silence. Modern lens design often has to satisfy both.

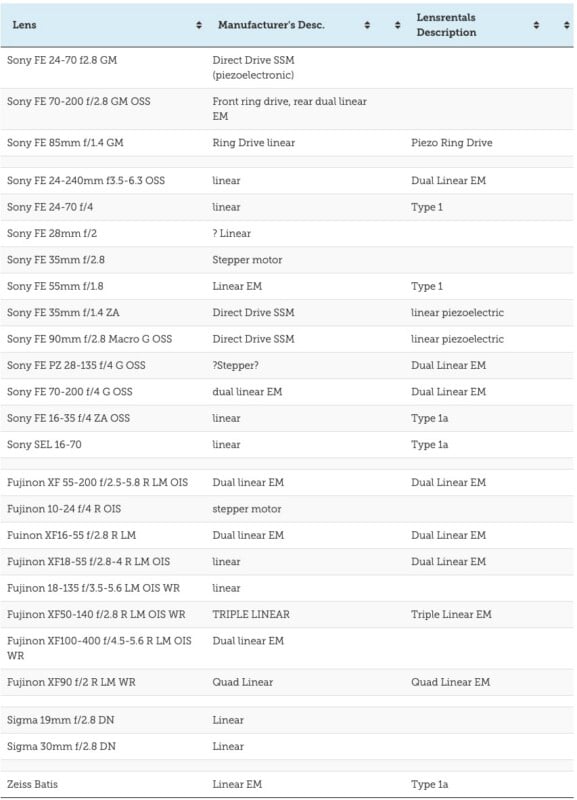

Linear Motors, Voice-Coil Motors, and Modern Mirrorless Lenses

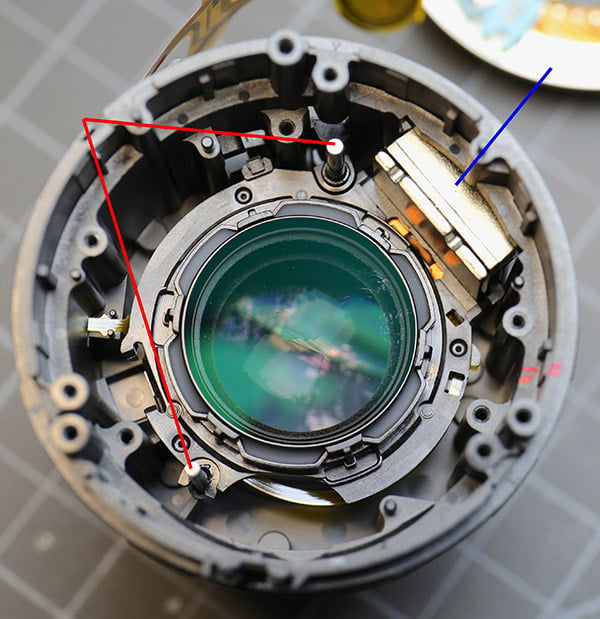

Modern mirrorless lenses increasingly use linear motors, voice-coil actuators, and other electromagnetic focusing systems that differ significantly from older SLR and DSLR autofocus mechanisms. In many earlier lenses, the motor produced rotational movement, which then had to be translated into linear movement through gears, cams, helicoids, or threaded mechanisms. In other words, autofocus often worked by motorizing a version of the same mechanical motion used in manual-focus lenses.

Linear and voice-coil motor (VCM) systems, which are also used in smartphone cameras, are more direct. Instead of rotating a helicoid, an electromagnetic actuator moves a focusing group forward or backward along guides or rails. The basic principle is similar to a loudspeaker voice coil: controlled electrical current interacts with magnets to create motion. In a lens, that motion moves a small optical group along the optical axis. This can reduce mechanical complexity, backlash, gear noise, and the inertia associated with older drive systems.

That direct movement is especially useful for mirrorless autofocus. Modern cameras make constant tiny focus corrections using on-sensor phase detection, contrast confirmation, subject tracking, eye detection, and video AF. The lens must be able to move quickly, stop accurately, reverse direction, and settle without overshooting. Linear motors are well suited to that kind of rapid, repeated adjustment. They are also typically very quiet, which matters far more now that still cameras are also expected to be serious video cameras.

These systems also give optical designers more flexibility. Instead of moving a large front group or an entire optical block, many modern lenses move one or more small internal focusing groups. Some high-end lenses use multiple motors to control different groups independently, improving close-focus performance, reducing aberrations, or maintaining sharpness across the focus range. In these designs, autofocus is not simply attached to the optical formula; it becomes part of how the lens performs optically.

There are tradeoffs. Linear and voice-coil systems depend on precise guides, position sensors, magnets, coils, flex cables, and firmware. A “linear motor” is not automatically superior to a ring-type ultrasonic motor in every lens. Execution matters. A well-designed ultrasonic telephoto can still be excellent, while a poorly implemented linear system can be fragile, inconsistent, or difficult to repair.

The broader point is that modern autofocus performance is now a body-lens partnership. The camera identifies the subject, measures focus error, predicts motion, and sends commands; the lens reports position and moves its focusing group with extreme precision. Without fast, quiet electromagnetic motors, the best mirrorless AF algorithms would have no way to turn their intelligence into sharp photographs.

From Focusing Aid to Photographic Intelligence

The history of autofocus is the history of cameras becoming more aware of the scene in front of them. Early systems merely estimated distance. SLR phase detection learned to identify focus direction. Contrast detection used the imaging sensor itself to confirm sharpness. On-sensor phase detection combined speed and accuracy. Subject recognition added semantic understanding: not just “this is sharp,” but “this is the eye,” “this is the bird,” “this is the car,” “this is the person the photographer probably cares about.”

Autofocus began as a convenience for people who did not want to focus manually. It became a professional necessity for sports and action. Today, it is one of the central battlegrounds of camera design. The best autofocus systems do not merely move glass quickly. They interpret the image, predict the future, and collaborate with the photographer’s intent.

Manual focus is still valuable. It remains essential for certain kinds of cinema, macro work, technical photography, and deliberate creative practice. But autofocus has become one of the defining achievements of modern camera engineering. It turned focusing from a purely mechanical act into an electronic, optical, computational, and increasingly intelligent process.

The camera has not replaced the photographer’s eye, but it has become far better at helping the photographer keep that eye sharp.

Image credits: Elements of header photo licensed via Depositphotos.com.