Imgur is Banning Explicit Content and Wiping Images Not Linked to Users

Popular photo and GIF hosting website Imgur has announced that it will change its Terms of Service to bring them closer to its Community Rules. Under the new terms, it will ban sexually explicit content and delete anonymously uploaded images.

“Our new Terms of Service will go into effect on May 15, 2023. We will be focused on removing old, unused, and inactive content that is not tied to a user account from our platform as well as nudity, pornography, and sexually explicit content,” Imgur explains.

Engadget reports that Imgur will work to remove pornographic content and nudity even if it is tied to an active user account. For Reddit users sharing sexually explicit content across the website’s many pornographic subreddits, Imgur has long been the go-to upload service because Reddit itself doesn’t allow users to upload explicit content directly to the site.

This isn’t the first time Imgur has cracked down on porn on its site. In 2019, the company stopped displaying content associated with not-safe-for-work (NSFW) Reddit communities. All labeled NSFW content uploaded to Imgur was hidden, although it wasn’t moved or deleted.

At that time, Imgur called the decision “difficult” and explained that pornographic images being displayed and easy to find on Imgur put the website’s “user growth, mission, and business at risk.”

With its recent decision to prohibit nude content altogether, Imgur again claims business interests as a motivating factor.

“Explicit and illegal content have historically posed a risk to Imgur’s community and its business, disallowing explicit content will allow Imgur to address these risks and protect the future of the Imgur community.”

Even after taking steps to make porn harder to find on Imgur and ensuring that people wouldn’t be exposed to it against their will or by accident, Imgur still let it exist for a few years. Imgur’s newfound vigor for cleaning its website may indicate that the company is preparing for a sale or intends to go public.

That’s speculation, of course, but this is the type of action a company takes to make itself more commercially appealing. Imgur itself says as much: explicit and illegal content has long posed a risk to the company’s business interests.

Of course, not all pornographic content is “illegal”; much of it is simply “explicit.” However, the dividing line between the two can be blurry, especially if an Imgur user uploads content that appears legal but is stolen.

“Revenge porn” is a serious concern, an issue that Facebook and Instagram took steps to deal with in 2021. Imgur may also struggle with the ongoing issue of not knowing if someone uploading explicit content is an adult. Facebook and Instagram recently announced moves to deal with this issue, with their new Take it Down platform designed to proactively prevent young people’s intimate images from spreading online.

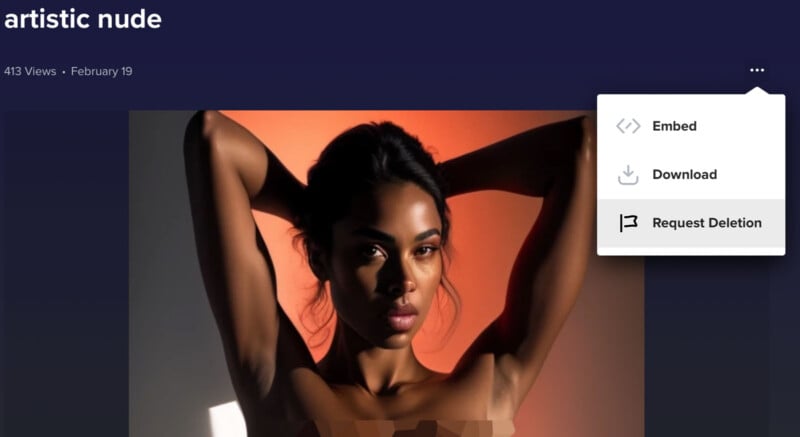

To contend with explicit and illegal content, Imgur uses a mix of automated detection and human review and moderation. It remains to be seen how this moderation approach will handle artistic nudity, which Imgur notes will remain permitted on the site.

Determining precisely what constitutes “artistic” nudity will challenge both automatic and manual moderation. Imgur expects that in the early stages of the upcoming change, some images that should have been allowed will be automatically flagged. Users will not be banned for automatic flags, but their content may not be visible.

“We understand that these changes may be disruptive to Imgurians who have used Imgur to store their images and artwork. These changes are an important step in Imgur’s continued efforts to remain a safe and fun space on the internet,” Imgur says.

Users have nearly a month to track down their favorite images not associated with a user account, or their favorite porn, and save them. The content will soon be wiped from Imgur. While some users may hesitate to shed a tear for lost memes and deleted pornography, the general issue of disappearing digital content affects everyone.