Startup Uses Anamorphic Lenses to Improve Smartphone Image Quality

Improving the performance of smartphone cameras often feels like approaching the end of an exponential curve: it can be done, but improvements are becoming less noticeable. Glass Imaging wants to change that through the novel use of anamorphic lenses.

Smartphone camera development has felt as though it is peaking because the ways to improve imaging generally require making a sensor bigger, which necessitates larger and more complex optics. To get around the current limitations and avoid addressing those two major factors, many companies are using computational photography algorithms to “cheat” physics, but even that has resulted in diminishing returns, Glass Imaging asserts.

In an interview with TechCrunch, Glass Imaging CEO and co-founder Ziv Attar says that around five years ago, smartphone companies started to make larger sensors and wider lenses, but even with noise reduction algorithms, the imagery produced this way ends up looking “weird and fake.”

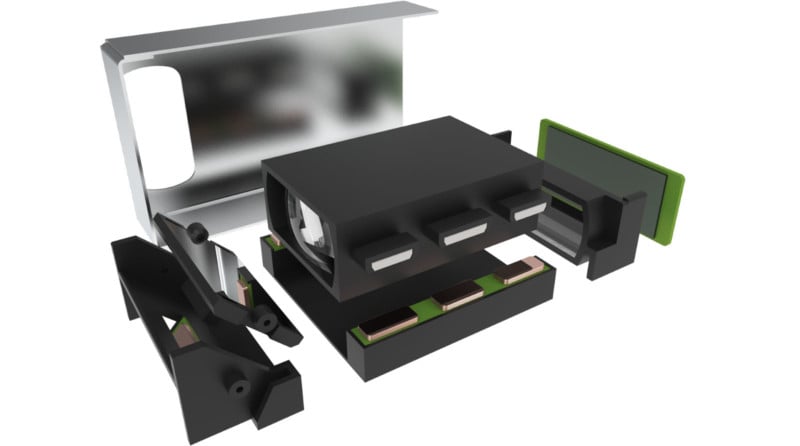

Glass Imaging has proposed a novel approach to solving this problem. Smartphone sensors, which are rectangular but close to square, need to get bigger, yet there is not enough room to accommodate the larger optics that would have to go along with them. Glass wants to change the aspect ratio of the smartphone sensor instead.

Inspired by Anamorphic Lenses

The proposed solution would see the sensor dramatically elongated, from the 7x5mm found in an iPhone to a 24x8mm ultra-wide format. The resulting sensor would be five to six times larger, which would result in better resolution, light gathering capability, dynamic range, and real optical bokeh.

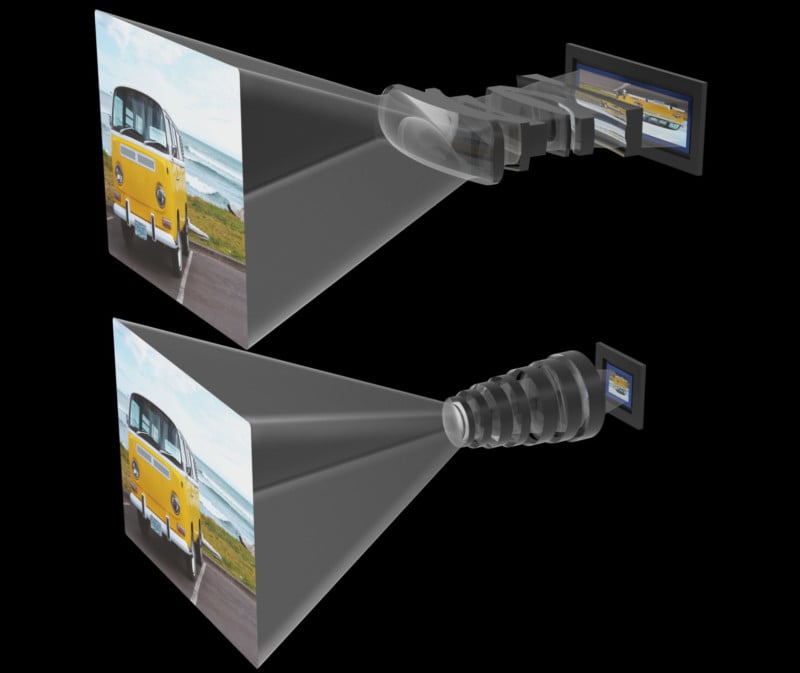

To take advantage of the now unusual shape of the proposed sensor, Glass wants to leverage the concepts that are behind anamorphic lenses. Anamorphic refers to a cinematography technique of shooting for widescreen onto standard 35mm film or sensors. The lenses squeeze the larger field of view to fit on that sensor and then the footage is de-squeezed in post-production to create a wider aspect ratio.

Glass proposes a similar methodology to be used in smartphones, but in reverse. By making a sensor longer — that is to say, more of a rectangle — a longer, rectangular lens would be needed to record footage. The plan here is to do that, but reverse the typical anamorphic process of de-squeezing a compressed image and instead squeeze a stretched image into a traditional format.
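As a rough illustration of what "squeezing a stretched image" means in software, the sketch below averages neighboring columns of a wide image to narrow it. It is only a toy resampler under stated assumptions: the function name `squeeze_horizontally`, the 2x factor, and the tiny sample grid are all hypothetical, and Glass Imaging's actual pipeline (which the company says relies on neural networks) is not described in enough detail to reproduce.

```python
def squeeze_horizontally(image, factor=2):
    """Narrow an image by averaging each group of `factor` adjacent columns.

    `image` is a list of rows, each row a list of numeric pixel values.
    This is a toy box-filter resampler, not Glass Imaging's method.
    """
    if factor < 1:
        raise ValueError("factor must be at least 1")
    squeezed = []
    for row in image:
        if len(row) % factor != 0:
            raise ValueError("row width must be a multiple of factor")
        squeezed.append(
            [sum(row[i:i + factor]) / factor for i in range(0, len(row), factor)]
        )
    return squeezed


# Hypothetical 6x2 "stretched" capture (3:1 aspect ratio).
stretched = [
    [0, 2, 4, 6, 8, 10],
    [1, 3, 5, 7, 9, 11],
]

# Squeezing by an assumed factor of 2 yields a 3x2 image (3:2 aspect ratio).
result = squeeze_horizontally(stretched, factor=2)
print(result)
```

A real implementation would more likely use a proper resampling filter and fold the geometry change into the distortion correction step, but the aspect-ratio change is the same idea.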

Once the photo is resized into the correct aspect ratio, it would correspond to a 24x16mm image area, just shy of an APS-C sized sensor, which is how the company is able to claim 11 times the imaging area of an iPhone.
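The size claims can be checked with simple arithmetic. The short sketch below uses only the dimensions quoted in this article (7x5mm, 24x8mm, and 24x16mm); the variable and function names are illustrative, and real sensor dimensions may differ slightly from these rounded figures.

```python
def area_mm2(width_mm, height_mm):
    """Return the area of a rectangle in square millimeters."""
    return width_mm * height_mm


# Dimensions as quoted in the article (rounded figures).
IPHONE_SENSOR = (7, 5)        # current iPhone sensor
GLASS_SENSOR = (24, 8)        # proposed elongated sensor
EQUIVALENT_FORMAT = (24, 16)  # image area after resizing to the final aspect ratio

iphone_area = area_mm2(*IPHONE_SENSOR)
glass_area = area_mm2(*GLASS_SENSOR)
equivalent_area = area_mm2(*EQUIVALENT_FORMAT)

physical_ratio = glass_area / iphone_area         # "five to six times larger"
equivalent_ratio = equivalent_area / iphone_area  # "11 times the imaging area"

print(f"Physical sensor: {glass_area} mm^2, {physical_ratio:.2f}x the iPhone")
print(f"Equivalent format: {equivalent_area} mm^2, {equivalent_ratio:.2f}x the iPhone")
```

The physical sensor works out to roughly 5.5 times the area of the iPhone sensor, and the equivalent 24x16mm format to roughly 11 times, which matches both figures cited above.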

There are downsides. One is that there would be no way to optically zoom with this design, but Glass Imaging says that digital zoom on this much larger sensor would still outperform the optical zoom found on most smartphone systems on the market today. Another issue is autofocus, which works very differently with this system than on traditional ones. Finally, the optical system employed here introduces a large number of distortions and aberrations. Glass Imaging seems to believe it can remove all of those with software, however.

“[Distortions] are all constrained during design such that we know in advance that we can correct for them,” Attar tells TechCrunch. “It’s an iterative process but we did kick start development of a custom dedicated software tool to co-optimize lens parameters and neural network variables.”

LDV Capital, one of Glass Imaging’s investors, which recently led a $2.2 million seed funding round, says that the process can revolutionize the power of tiny camera modules. The plan is to combine this innovative approach to hardware with computational photography and artificial intelligence to make photos taken on smartphones markedly better.

“By rethinking the optical system from the ground up to be tailor-made for smartphones, we managed to fit huge CMOS sensors that collect around nine times more light than traditional designs,” Attar says in a blog post on LDV Capital’s website. “Our state-of-the-art AI algorithms seamlessly correct all the distortions and aberrations and as a result, smartphone image quality is radically increased, up to 10x.”

Solid Proof of Concept

In a proof of concept, Glass Imaging compares its approach to photos that are produced with an iPhone 13 Pro Max. The results show a stark improvement in quality across every visible metric.

Attar and fellow Glass co-founder Tom Bishop met at Apple and have years of experience developing multiple patents on computer vision and computational photography.

“At Glass Imaging, we aim to offer similar image quality of bulky professional DSLR and mirrorless cameras — ultra-high-resolution detail, dynamic range, low-light clarity, color and tone accuracy — but in a dramatically smaller, thinner form factor,” Bishop says.

Several companies have promised improvements to smartphone image quality using a variety of techniques, but few have provided solid evidence of making good on those promises. The example images provided by Glass Imaging do indicate the company’s idea can work, and time will tell if any smartphone manufacturer will partner with the company to bring an example of it to market.