DALL-E vs Photoshop: Which is Better for Extending Photos?

One of the best features of Adobe Photoshop’s new Generative Fill tool is extending a photo beyond its original borders. But how does it stack up against DALL-E’s Outpainting?

OpenAI’s DALL-E is primarily an image generator, allowing users to create any picture they want by typing in a prompt. The Outpainting feature has been available since September last year.

Photoshop’s Generative Fill has been creating a big buzz recently. Both programs use generative artificial intelligence (AI) to extend a photo, but which one does it better?

In this test, we will give each program the same source photo and the same number of empty pixels to generate into. The source images are framed in white; everything outside of that frame is AI-generated.
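For readers who want to reproduce the setup, here is a minimal sketch of how an identical blank canvas could be planned for both tools. The article does not state the image dimensions or how much empty space was added, so the function name `plan_canvas`, the equal margin on every side, and the example numbers are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OutpaintCanvas:
    width: int          # full canvas width in pixels
    height: int         # full canvas height in pixels
    offset_x: int       # where the source photo's left edge sits
    offset_y: int       # where the source photo's top edge sits
    empty_pixels: int   # pixels the AI tool has to generate


def plan_canvas(src_w: int, src_h: int, margin: int) -> OutpaintCanvas:
    """Centre a src_w x src_h photo on a canvas with `margin` empty
    pixels on every side (hypothetical layout, not from the article)."""
    if src_w <= 0 or src_h <= 0 or margin < 0:
        raise ValueError("dimensions must be positive and margin non-negative")
    width = src_w + 2 * margin
    height = src_h + 2 * margin
    empty = width * height - src_w * src_h
    return OutpaintCanvas(width, height, margin, margin, empty)


if __name__ == "__main__":
    # Assumed example values: a 1024x683 source photo with a 256-pixel margin.
    print(plan_canvas(1024, 683, 256))
```

Feeding the same planned canvas to each program is one way to keep the comparison fair, since both tools then have exactly the same amount of blank space to fill.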

PetaPixel is operated by a group of talented photographers (if I do say so myself), so we have drawn upon that wealth of artistry for the source photos.

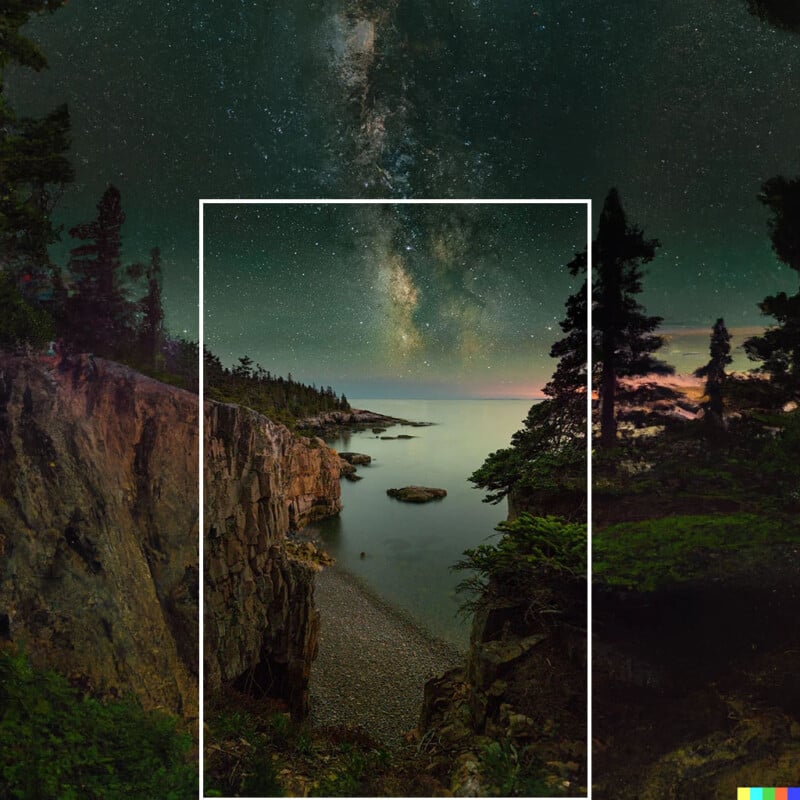

Nighttime

First up, we wanted to test a starry nighttime scene with the Milky Way Galaxy. The photo was taken by PetaPixel’s multi-talented Jeremy Gray in the serenity of Maine, and there was a clear winner.

Photoshop’s Generative Fill has done an awful job here. With visible lines and some very strange-looking galaxies at the top left of the image, this brings new meaning to “Photoshop fail.”

DALL-E has done a far better job, extending the image seamlessly. That’s first blood to OpenAI.

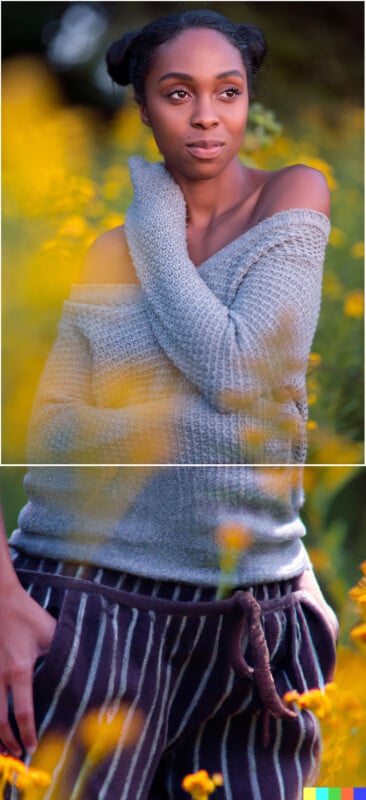

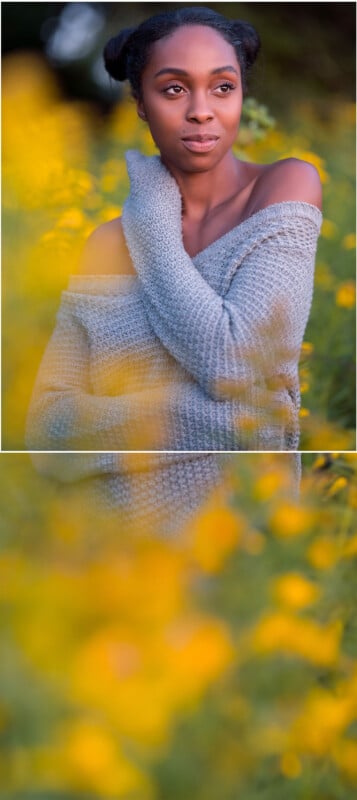

Portrait

Next up, it’s extending a portrait. This may be the most popular use of these tools: how well can generative AI extend a picture of a person?

After it did so well in the first test, DALL-E really let itself down on this one with a preposterous image that bears scant relation to the source photo.

Photoshop, on the other hand, has come out with flying colors. In fact, our in-house superstar photographer Jeremy Gray, who took the original photo, says that it’s “exactly what the scene looked like outside the frame.”

Photoshop’s Generative Fill has really stepped up to the mark here. But as for DALL-E, why is there a random hand slipping into the pajama pocket? Deeply unsettling.

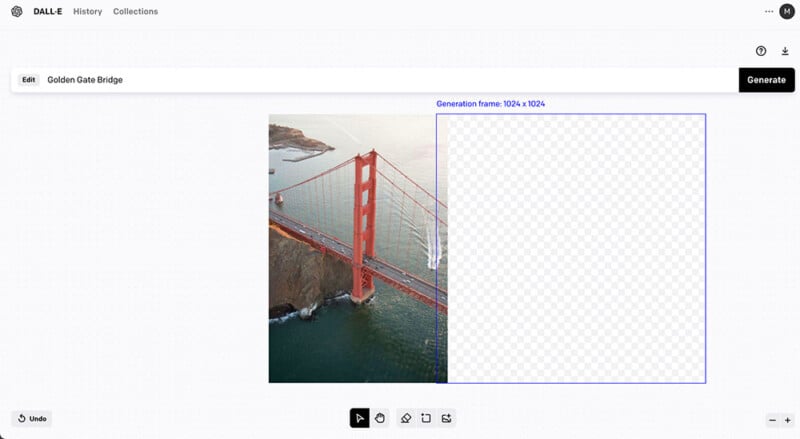

Architecture

Did you know that before becoming Editor in Chief of PetaPixel, Jaron Schneider hung out of helicopters nailing iconic photos like this one of the Golden Gate Bridge in San Francisco? Well, he did, and complex architecture is a difficult test for generative AI.

This was always going to be a tough one and, unsurprisingly, both programs have performed poorly. Photoshop seems to have done better with color and tone, but DALL-E may have done better generating the actual structure of the bridge. Neither result looks professional.

Landscape

From difficult to easy: generative AI should perform well when expanding daytime landscape photos such as this one, which your writer took last weekend while paragliding.

As with the architecture photo, there is not much to choose between them, but this time it’s because they’ve both done a great job. However, upon closer inspection, I think Adobe wins because the image beyond the source photo is sharper and more cohesive than OpenAI’s offering.

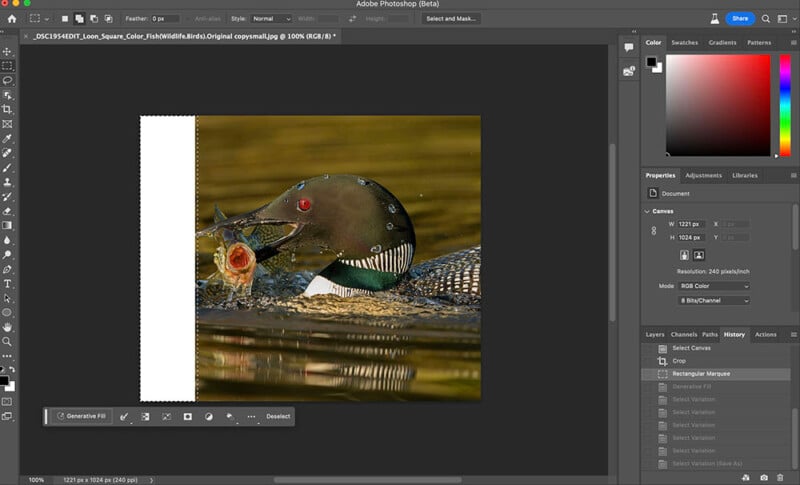

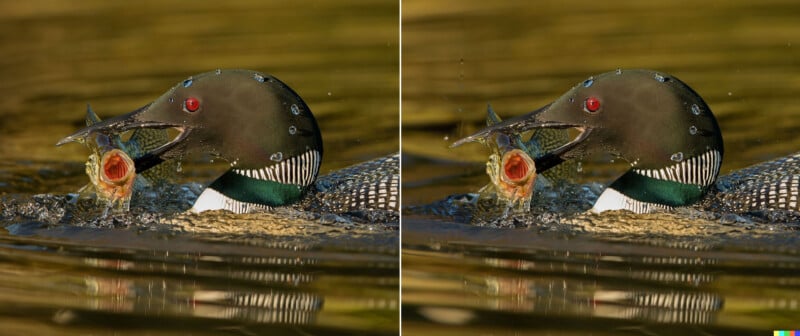

Wildlife

When PetaPixel’s Jeremy Gray took this amazing photo of a common loon gobbling a fish, he was in a kayak, zoomed out as much as he could. It’s bothered him for years that the bird’s beak was cropped out. Let’s see if we can fix it.

While the DALL-E effort is half-decent, Photoshop has really excelled here. This is a great example of how AI tools can help out photographers when something’s gone slightly awry during a shoot.

“My photo... it’s saved,” adds Jeremy. “I’ve waited so long for this.”

Which is Better? Photoshop or DALL-E?

This is not a comprehensive test; it’s a fun snapshot of what these programs are capable of, and better editors than me could no doubt do a better job.

That said, Photoshop Generative Fill has clearly defeated DALL-E’s Outpainting.

Where DALL-E has really let itself down is in the last two photos, where both programs have done a good job but Photoshop has done the more professional one.

DALL-E’s Outpainting is still an incredible tool and I wouldn’t put anyone off using it. But Photoshop also has the advantage of unlimited generations if you have an Adobe subscription. DALL-E uses a credit system, and I used up my free credits for this experiment. If I wanted to continue using the program, I would have to pay for extra credits.