Gravity Telescope to Use Sun as Giant Lens to Capture Distant Planets

A pair of Stanford University astrophysicists have proposed a new way to use gravitational lensing that would be up to 1,000 times more precise than current methods. The hope is to use the concept to take better photos of distant planets.

While astronomers have discovered more than 5,000 planets orbiting other stars in the galaxy, the first of which was found in 1992, not much can be done after the point of discovery. As Stanford News explains, scientists know these planets exist, but the limits of current observation technology mean that, aside from a few features, the details of these worlds remain a mystery.

Astrophysicists Alexander Madurowicz and Bruce Macintosh have proposed a way to leverage gravitational lensing to capture better photos of exoplanets than current methods in a paper published in The Astrophysical Journal on May 2.

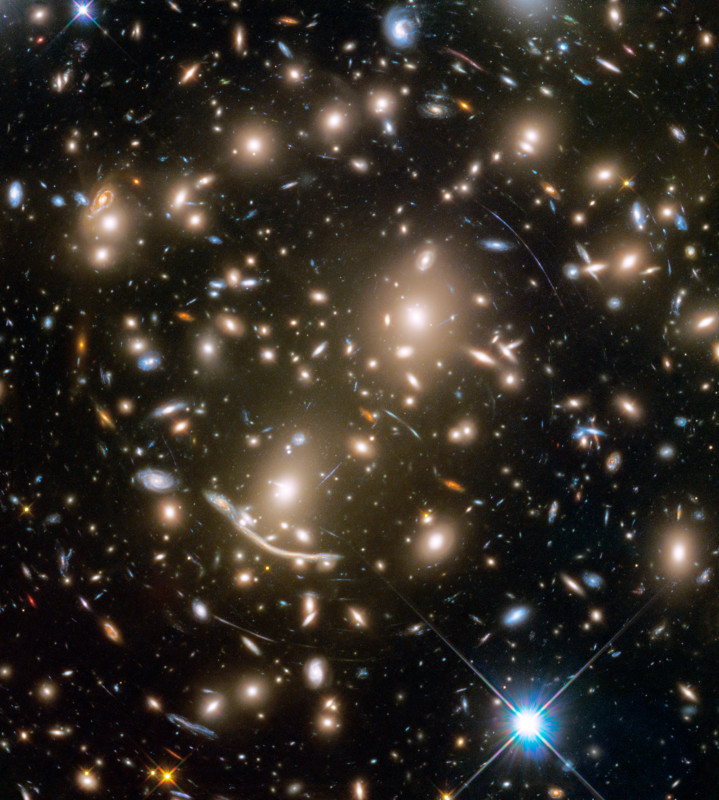

Gravitational lensing is a technique that takes advantage of gravity’s ability to bend space-time and allows scientists to see across vast distances. As NASA explains, extreme gravity can create interesting visual effects that current telescopes can detect.

In short, gravitational lensing is an effect theorized by Einstein in which gravity distorts space and creates an “optic” of sorts that channels light in a way scientists describe as akin to a giant magnifying glass. A gravitational lens can occur when a huge amount of matter, like a large cluster of galaxies, creates a gravitational field that distorts and magnifies light from distant galaxies that are behind it, but in the same line of sight.

To date, most gravitational lensing images have relied on massive celestial bodies such as galaxy clusters to bend space-time, but the Stanford astrophysicists want to use the Sun itself as the lens, which would allow them to focus on planets that are too far away to see with any other method.

“We want to take pictures of planets that are orbiting other stars that are as good as the pictures we can make of planets in our own solar system,” said Bruce Macintosh, a physics professor in the School of Humanities and Sciences at Stanford and deputy director of the Kavli Institute for Particle Astrophysics and Cosmology (KIPAC).

“With this technology, we hope to take a picture of a planet 100 light-years away that has the same impact as Apollo 8’s picture of Earth.”

The proposed method would position a telescope or camera behind the Sun and use its gravity to bend light and focus it over great distances. But since the Sun would be the focusing lens, it would also greatly obstruct the object and allow only a small amount of the target to be visible as a halo, akin to the ring that can be seen from Earth during a total eclipse. Using an algorithm, the astrophysicists can take that ring and reconstruct it into a complete image of the planet.

![]()

The algorithm can undistort the light from the ring and reverse the bending from the gravitational lens, which turns the ring back into a round planet, Stanford News explains.

In the image above, which compares a sharp image of Earth to what would be generated from this gravitational lensing method, important data like the amount of landmass and water is clearly visible. While the finished image isn’t particularly detailed by most on-Earth photo standards, it contains significantly more information than astronomers currently have and represents a massive leap in data gathering capabilities.

At present, this idea is only theoretical as the proposed technique would require more advanced space travel than is currently available to humans, but Madurowicz and Macintosh strongly believe the concept is worth exploring.
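A rough back-of-the-envelope sketch shows why the travel requirement is so steep. It is not taken from the paper: it assumes the standard weak-field formula from general relativity for how much a mass bends passing light, considers only rays that just graze the Sun's edge, and ignores complications such as the solar corona. The function names and constants below are chosen for illustration.

```python
import math

# Physical constants (SI units); standard reference values.
GM_SUN = 1.32712440018e20   # Sun's gravitational parameter G*M, m^3/s^2
C = 299_792_458.0           # speed of light, m/s
R_SUN = 6.957e8             # nominal solar radius, m
AU = 1.495978707e11         # astronomical unit, m


def deflection_angle(impact_parameter_m: float) -> float:
    """Bending angle in radians for light passing the Sun at the given
    closest-approach distance (weak-field approximation: 4GM / (c^2 b))."""
    return 4.0 * GM_SUN / (C ** 2 * impact_parameter_m)


def focal_distance(impact_parameter_m: float) -> float:
    """Distance in meters at which parallel rays bent by that angle cross
    the optical axis (small-angle approximation: b / angle)."""
    return impact_parameter_m / deflection_angle(impact_parameter_m)


# Rays grazing the Sun's edge give the closest possible focal point.
limb_arcsec = math.degrees(deflection_angle(R_SUN)) * 3600.0
d_min_au = focal_distance(R_SUN) / AU

print(f"Bending at the Sun's edge: {limb_arcsec:.2f} arcseconds")
print(f"Closest focal point: about {d_min_au:.0f} AU from the Sun")
```

Under these assumptions the nearest usable focal point lies roughly 550 times farther from the Sun than Earth is, which is more than three times as far as Voyager 1, the most distant spacecraft, has traveled since its launch in 1977.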

Image credits: Alexander Madurowicz and Bruce Macintosh, via “Integral Field Spectroscopy with the Solar Gravitational Lens”