‘It Devalues Photographers’: Amnesty Deletes AI Images After Backlash

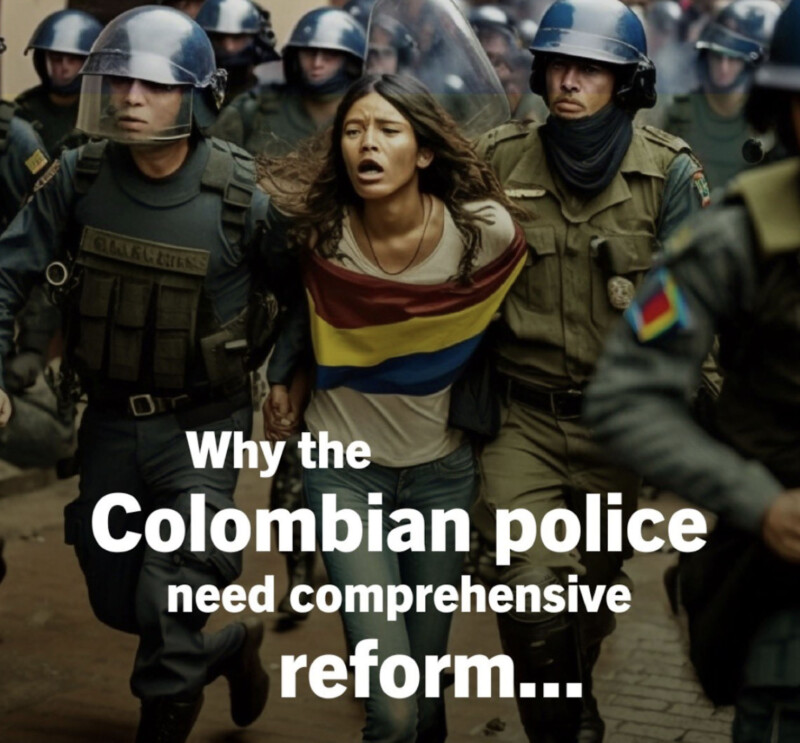

Amnesty International has been criticized for using images generated by artificial intelligence (AI) to depict police brutality in Colombia.

Amnesty Norway has since deleted its tweets containing the images (believed to have been generated with Midjourney) after the move was roundly condemned, with one media scholar claiming it “devalues” the work of photographers.

In an email to PetaPixel, Amnesty says that it removed the images to pause and reflect further on the organization’s use of AI.

“We hope that removing the images will help us to raise awareness of the human rights violations committed against protesters in Colombia,” a spokesperson says, “as we have taken on board the comments and criticism that their use was only distracting from our core message of support for the victims and their calls for justice.”

What Did the AI Images Show?

The leading global human rights organization posted synthetic images depicting protests and police brutality in Colombia.

Amnesty’s Norway regional account posted the images to acknowledge the two-year anniversary of a major protest in Colombia where a UN report found that police committed “serious” abuses and were responsible for at least 28 deaths.

Defense

Commentators reacted negatively to the images, but Amnesty initially defended its strategy, telling Gizmodo that it used AI to protect the people present at the protests and that it had done so after consulting with partner organizations in Colombia.

“Many people who participated in the National Strike covered their faces because they were afraid of being subjected to repression and stigmatization by state security forces,” an Amnesty spokesperson tells Gizmodo.

“Those who did show their faces are still at risk and some are being criminalized by the Colombian authorities.”

Amnesty further pointed out that it had added a disclaimer at the bottom of the images noting that they were synthetic, in order to avoid “misleading” anyone.

Criticism

Critics said that using AI images plays into the hands of authoritarian leaders, who will immediately dismiss any photo or video of human rights abuses as fake.

“This puts all the pressure on the journalists and human rights defenders to ‘prove real’,” says Sam Gregory, head of WITNESS, a global human rights network focused on video use.

“This can occur preemptively too, with governments priming it so that if a piece of compromising footage comes out, they can claim they said there was going to be ‘fake footage.’”

Amnesty International is using AI-generated images to protest police violence in Colombia. While I fully support the cause, I think the means are profoundly wrong. Using computer-generated photorealistic illustrations not only blurs the line between fact and fiction … 1/3 https://t.co/IYsWStyn6A

— Roland Meyer (@bildoperationen) May 1, 2023

Meanwhile, media scholar and author Roland Meyer called the use of AI by Amnesty “profoundly wrong.”

“It also devalues the work of all those brave reporters and photographers who have spent decades documenting human rights violations (and whose images were probably used to train the software used here),” he adds.

Amnesty’s Response

As mentioned earlier, Amnesty says it is reflecting on its use of AI, adding: “Amnesty International does not currently have a policy in favor [of] or against the use of AI-generated [images].”

“However, the organization is cautious when using this. We currently only use it when it is in the interest of protecting human rights defenders.

“Amnesty International is aware of the risk of misinformation if this tool is used in the wrong way. We therefore do not strive to create photo-realistic outputs and indicate on the images that these are generated with AI.

“Before making further use of AI, we will analyze how it can help to further our mission to defend and promote human rights around the world in a manner that avoids creating situations that could detract from our messages and intended aims.

“We take the criticism that we have received seriously and want to ensure we understand better the implications and our role to address the ethical dilemmas posed by the use of this technology.

“As such, we will not use AI-generated images again until we have a clear policy on the matter.”