How Acoustic Cameras Can See Sound

While most photographers are used to capturing what their camera sees, an acoustic camera captures what it hears. Well-known YouTube science personality and educator Steve Mould’s newest video explores acoustic cameras and how they can “see sound.”

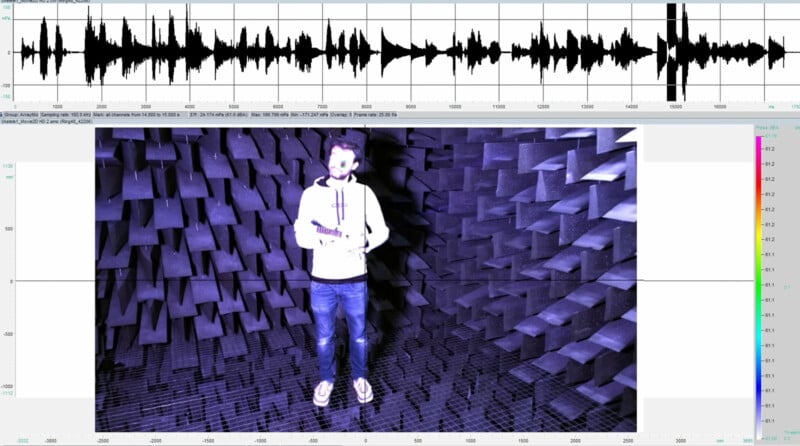

In the video spotted by Laughing Squid, Mould explains that acoustic cameras can show the character of sound alongside spatial information about where sound is located. Acoustic cameras can even work in slow-motion.

Like traditional light-based cameras, acoustic cameras have a finite dynamic range, which can be a limiting factor. In one example, Mould plays the ukulele and speaks at the same time.

“It’s interesting that the sound appears to switch between coming from my mouth and coming from the ukulele, even though sometimes both are making a sound at the same time,” Mould says.

Mould observes that this is a dynamic range problem, much like the one traditional cameras face. With a regular camera, the limited dynamic range of an image sensor prevents the camera from properly exposing details in dim shadows and bright highlights simultaneously. An acoustic camera is similarly restricted: when it tries to “expose” the louder sounds of the ukulele, it reduces its sensitivity and can no longer “see” Mould’s voice.

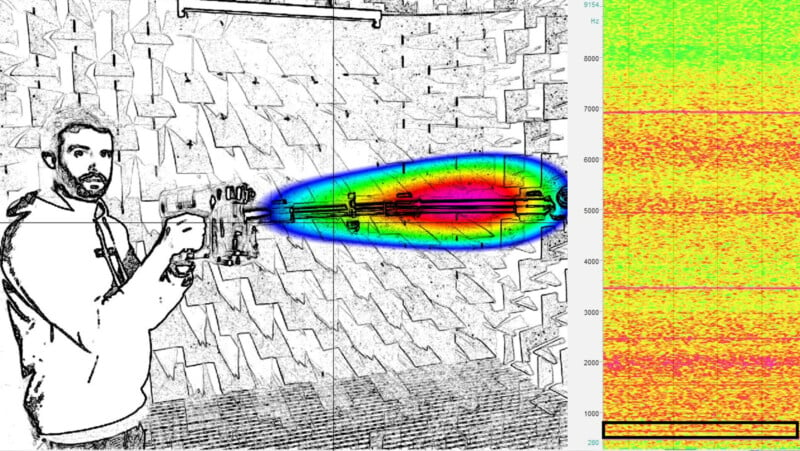

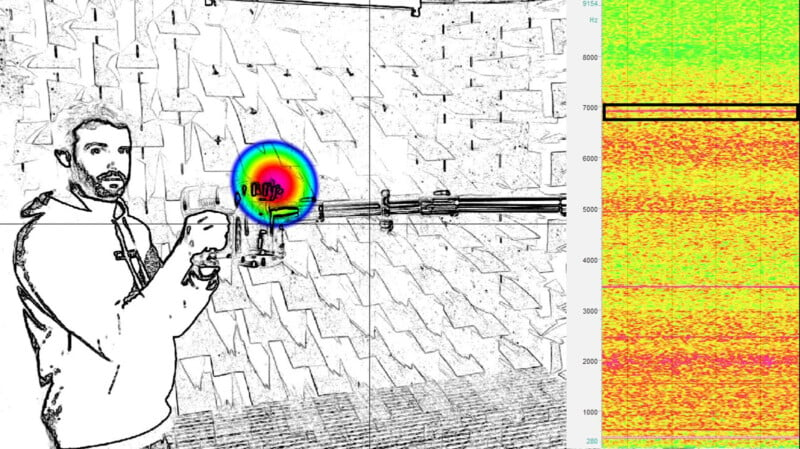

Acoustic cameras can isolate specific frequencies, such as Mould’s voice or the instrument. This points to one of the industrial applications of acoustic cameras. When Mould runs a vacuum cleaner, the acoustic camera’s software lets him isolate specific frequencies and see where that precise sound is coming from. When Mould selects a low frequency, it’s evident that this sound originates from the vacuum cleaner tube, as shown by its bright magenta and red appearance.

While the vacuum tube resonates at around 400 hertz (Hz), the vacuum’s motor resonates at a much higher 7,000 Hz. This information is useful when a company is trying to engineer quieter appliances.
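To make the idea of isolating a frequency concrete, here is a minimal Python sketch. It is an illustration, not the camera software's actual method: it builds a made-up signal containing a 400 Hz tone and a quieter 7,000 Hz tone (the amplitudes are assumptions), then measures how much energy sits at a chosen frequency using a single-bin discrete Fourier transform. The function name `tone_power` is hypothetical.

```python
import math

FS = 40_000  # samples per second, the audio rate quoted in the video

def tone_power(samples, freq_hz, fs=FS):
    """Power of one frequency component, computed with a single-bin DFT."""
    re = sum(s * math.cos(2 * math.pi * freq_hz * n / fs) for n, s in enumerate(samples))
    im = sum(s * math.sin(2 * math.pi * freq_hz * n / fs) for n, s in enumerate(samples))
    return (re * re + im * im) / len(samples) ** 2

# Made-up test signal: a loud 400 Hz "tube" tone plus a quieter 7,000 Hz "motor" tone.
N = 4000  # 0.1 s of audio
signal = [
    1.0 * math.sin(2 * math.pi * 400 * n / FS)
    + 0.5 * math.sin(2 * math.pi * 7000 * n / FS)
    for n in range(N)
]

for freq in (400, 1000, 7000):
    print(f"{freq:>5} Hz power: {tone_power(signal, freq):.4f}")
```

Running the same measurement on every microphone channel is what would let software ask where the 400 Hz energy is coming from, separately from the 7,000 Hz energy.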

The software even includes an eyedropper tool, like the one photographers use in Photoshop to select colors and color ranges, that lets users pick specific sound frequencies on an image and isolate them.

Many people helped Mould with his video, including AcSoft and the Birmingham Center for Railway Research and Education at the University of Birmingham, which hosted Mould’s acoustic operations. Gfai tech made the acoustic cameras seen in Mould’s video.

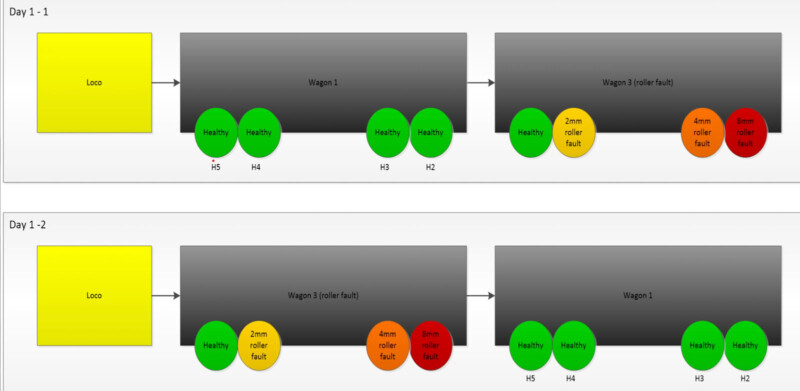

The Birmingham Center for Railway Research and Education is interested in acoustic cameras because an acoustic camera setup alongside railway tracks can “see” which wheels on a train are making what noises. These audible clues allow workers to monitor the health of each wheel, which can ensure proper and efficient maintenance of their trains. Without this type of audio monitoring, the first sign of an issue with wheels often occurs when the train breaks down, which causes delays and increases operational costs.

“One thing that’s insanely cool about acoustic cameras is the framerate,” says Mould. “The typical framerate for a camera is 60 frames per second, that’s what I film my YouTube videos in. But when you record sound, you’re typically sampling at around 40,000 samples per second. When you translate that to an acoustic camera, that’s 40,000 frames per second. So, by accident, acoustic cameras are also high-speed cameras, so you can do things like film what an echo looks like and then play it back in super-slow motion.”
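The numbers in that quote can be checked with a few lines of Python. This is back-of-the-envelope arithmetic only, and the speed of sound (343 m/s, roughly air at room temperature) is an assumption not stated in the video.

```python
SPEED_OF_SOUND = 343.0  # m/s, assumed value for air at about 20 °C
AUDIO_RATE = 40_000     # acoustic "frames" per second, from the quote
VIDEO_RATE = 60         # ordinary video frames per second, from the quote

# How much slower the footage plays if every acoustic frame becomes one video frame.
slowdown = AUDIO_RATE / VIDEO_RATE

# How far a sound wave travels between two consecutive acoustic frames.
mm_per_frame = SPEED_OF_SOUND / AUDIO_RATE * 1000

print(f"Slow-motion factor: about {slowdown:.0f}x")
print(f"Sound travels about {mm_per_frame:.1f} mm per frame")
```

At that rate a wavefront moves less than a centimeter between frames, which is why an echo crossing a room can be followed step by step.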

How acoustic cameras work relates to another topic Mould has covered before: interaural time difference and directional hearing. People detect where sounds come from based partly on when a sound reaches each ear. If the sound arrives even slightly later at one ear, that tells the listener it is coming from off to one side. A single delay does not pin down one direction, though. It is consistent with a whole region of possible source locations, the “cone of confusion.”
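The relationship between delay and direction can be sketched in Python. This uses the simple far-field model in which the delay equals the ear spacing times the sine of the off-axis angle, divided by the speed of sound. The 0.18 m ear spacing, the 343 m/s speed of sound, and the function names are assumptions for illustration, and real hearing involves more cues than this.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed value for air
EAR_SPACING = 0.18      # m, rough distance between two ears (assumed)

def delay_from_angle(angle_deg, spacing_m=EAR_SPACING, c=SPEED_OF_SOUND):
    """Arrival-time difference in seconds for a distant source at angle_deg off straight ahead."""
    return spacing_m * math.sin(math.radians(angle_deg)) / c

def angle_from_delay(delay_s, spacing_m=EAR_SPACING, c=SPEED_OF_SOUND):
    """Off-axis angle in degrees implied by an arrival-time difference."""
    ratio = c * delay_s / spacing_m
    # Clamp so that a slightly noisy measurement cannot push asin out of range.
    ratio = max(-1.0, min(1.0, ratio))
    return math.degrees(math.asin(ratio))

delay = delay_from_angle(30.0)
print(f"Delay for a source 30 degrees off-axis: {delay * 1e6:.0f} microseconds")
print(f"Angle recovered from that delay: {angle_from_delay(delay):.1f} degrees")
```

The recovered angle is measured relative to the line through the two ears, so every direction that makes the same angle with that line produces the same delay. Those directions together form the cone of confusion. With these assumed numbers, the largest possible delay is roughly half a millisecond.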

The human brain has many sophisticated means of better localizing the origin of specific sounds, which can reduce the cone of confusion. An acoustic camera solves the same issue by “adding more ears,” or microphones. Each new pair of microphones adds a new cone of confusion, and the region where these cones overlap helps isolate the source of the noise.
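One common way to combine many microphones is delay-and-sum beamforming. The video does not say which algorithm the camera's software uses, so the Python sketch below is an assumption chosen for illustration. It simulates a single click reaching four microphones, then tests candidate points on a scan line: for each candidate it shifts the channels by the delays that point would produce and adds them up. Only the true source position lines all the clicks up. The microphone layout, the scan line, and all function names are hypothetical.

```python
import math

C = 343.0    # speed of sound in m/s (assumed)
FS = 40_000  # samples per second, the rate quoted in the video

def travel_samples(point, mic):
    """Travel time from point to mic, expressed in whole samples."""
    return round(math.dist(point, mic) / C * FS)

def simulate(src, mics, n_samples=512, emit_sample=100):
    """One channel per mic: silence except for a single click from src."""
    channels = []
    for mic in mics:
        ch = [0.0] * n_samples
        ch[emit_sample + travel_samples(src, mic)] = 1.0
        channels.append(ch)
    return channels

def steered_power(channels, mics, point):
    """Delay-and-sum: align channels for `point` using relative delays only, then sum."""
    delays = [travel_samples(point, mic) for mic in mics]
    shifts = [d - min(delays) for d in delays]
    usable = len(channels[0]) - max(shifts)
    power = 0.0
    for t in range(usable):
        summed = sum(ch[t + k] for ch, k in zip(channels, shifts))
        power += summed * summed
    return power

def locate(channels, mics, grid):
    """Return the candidate point with the highest steered power."""
    return max(grid, key=lambda p: steered_power(channels, mics, p))

# Four mics in a row, and a scan line of candidate points 2 m in front of them.
mics = [(-0.3, 0.0), (-0.1, 0.0), (0.1, 0.0), (0.3, 0.0)]
grid = [(x / 4, 2.0) for x in range(-4, 5)]
channels = simulate((0.5, 2.0), mics)
print("Estimated source position:", locate(channels, mics, grid))
```

Coloring each candidate point by its steered power, instead of keeping only the maximum, would give the kind of heat map that gets overlaid on the video image.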

Unlike human ears, which work alongside the brain to match frequencies of varying sounds and focus on specific noises, acoustic cameras isolate particular frequencies. Different frequencies have different optimal spacings between mics. By using many mics, an acoustic camera has mic pairs at many different spacings, which lets it “see” sound across a wide range of frequencies with high fidelity.
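A standard rule of thumb from array design is that two microphones should be no more than half a wavelength apart to avoid ambiguous directions at a given frequency. The video does not state which criterion the camera's designers used, so treat the Python sketch below as an assumption. It applies the half-wavelength rule to the two vacuum cleaner frequencies mentioned earlier, with an assumed speed of sound of 343 m/s.

```python
SPEED_OF_SOUND = 343.0  # m/s, assumed value for air

def half_wavelength_spacing(freq_hz, c=SPEED_OF_SOUND):
    """Largest mic spacing in meters that satisfies the half-wavelength rule."""
    wavelength = c / freq_hz
    return wavelength / 2.0

for freq in (400, 7000):
    spacing_cm = half_wavelength_spacing(freq) * 100
    print(f"{freq:>5} Hz: mics up to about {spacing_cm:.1f} cm apart")
```

Under this rule the 400 Hz tube resonance tolerates mics tens of centimeters apart, while the 7,000 Hz motor noise calls for mics only a couple of centimeters apart, which is one reason an array benefits from pairs at many different spacings.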

Some acoustic cameras operate alongside video cameras, allowing companies to capture visual depth information that audio data can then be mapped onto.


One of Mould’s favorite parts of his deep dive into acoustic cameras was seeing how an echo propagates through space. Hot spots on the screen show how the sound of someone clapping bounces off the surrounding walls, and it’s truly incredible to see the echo pattern in super slow-mo.

Image credits: Steve Mould