Robot Vacuum Took Photo of Woman on Toilet That Was Shared on Facebook


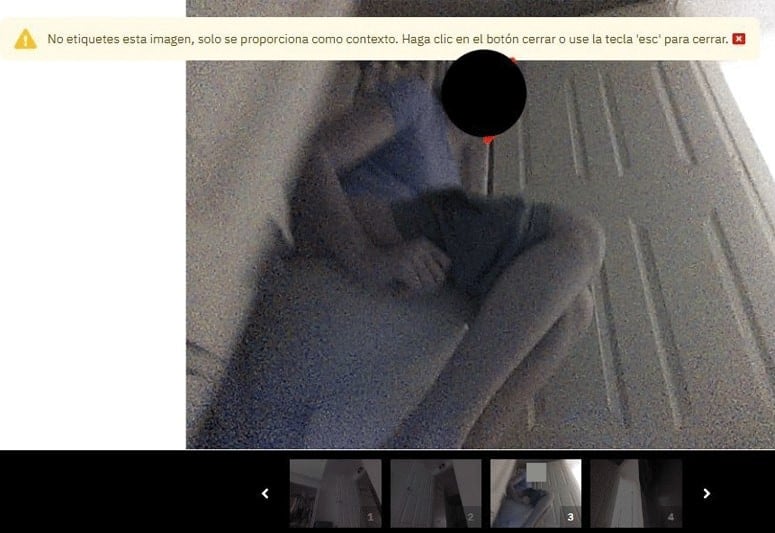

An iRobot Roomba robot vacuum took intimate photographs of a woman on the toilet, as well as images of a young child, that were then shared on Facebook.

According to a report by MIT Technology Review on Monday, the sensitive photos were taken by development versions of iRobot’s Roomba J7 series robot vacuum cleaners in 2020.

The images were then sent to Scale AI, a startup that contracts workers around the world to label audio, photo, and video data used to train artificial intelligence (AI).

From there, the photos were reportedly shared by IT workers in Venezuela in private groups on Facebook and the chat app Discord.

MIT Technology Review obtained 15 screenshots of these private photos, which had been posted to closed social media groups.

In one compromising photograph, a young woman in a lavender T-shirt sits on the toilet, her shorts pulled down to mid-thigh. The woman’s face is blocked in the main image but is unobscured in the scroll of shots below it.

In another image, a boy who appears to be eight or nine years old, and whose face is visible, lies on his stomach across from the household gadget on a hallway floor.

The other pictures show rooms from people’s homes around the world. Furniture, décor, and objects are accompanied by labels like “tv” or “plant_or_flower.”

‘Two Million Images’

A spokesperson for Roomba maker iRobot, which Amazon is in the process of acquiring, confirmed that the images had been captured by its devices.

The company says that the 15 photos that ended up on social media had been among some two million images shared with Scale AI.

However, the spokesperson for iRobot added that the pictures had been taken by “special development robots with hardware and software modifications that are not and never were present on iRobot consumer products for purchase”.

The spokesperson also said that the devices had been given to “paid collectors and employees” who had signed written agreements acknowledging that any data collected by the robots, including video, could be sent back to the company for training purposes.

The company says that the special test devices had been marked with a sticker that made it clear video recording was in progress and that it had advised the test subjects to “remove anything they deem sensitive from any space the robot operates in, including children.”

iRobot declined to let MIT Technology Review view the consent agreements and did not make any of its paid collectors or employees available to discuss their understanding of the terms.

Flaws

Both iRobot and Scale AI acknowledge that sharing the images on social media violated their agreements. Scale AI also says that the contract workers who shared the pictures breached their own agreements.

iRobot says the images came from its devices in countries including France, Germany, Spain, the U.S., and Japan.

The IT workers had discussed the images on Facebook, Discord, and other online groups that they had created to share advice on handling payments and labeling tricky objects.

This process of recording and labeling images is used to improve a robot vacuum cleaner’s computer vision, which allows the devices to accurately map their surroundings using high-definition cameras and an array of laser-based sensors.

The technology allows the Roomba robot vacuum to determine the size of a room, avoid obstacles like furniture and cables, and adjust its cleaning routine.

The incident reveals the flaws in a system in which huge amounts of data are exchanged between tech manufacturers and third-party companies that help improve their AI algorithms.

The report comes as Amazon is working to close a $1.7 billion agreement to buy iRobot, raising questions about how tech companies use and protect the data they hoover up.

Image credits: Header photo licensed via Depositphotos. Main images sourced from MIT Technology Review