AI Connected to Brain Allows Humans to ‘See’ Around Corners


Artificial intelligence (AI) can use a person’s brainwaves to see around corners and create images of objects the human eye cannot directly see.

Researchers at the University of Glasgow have shown that a computational imaging technique known as “ghost imaging” can be combined with human vision to reconstruct images of objects hidden from view by analyzing how the brain processes barely visible reflections on a wall.

Ghost imaging has been used before to reveal objects hidden around corners and normally involves beaming laser light onto a surface, around a corner and back to a camera sensor, then using algorithms to decode the scattered returned light to identify the object. For the new study, researchers swapped out the camera for human eyes.

Although the researchers previously used human vision in a passive manner to perform ghost imaging, the new work gives the human visual system an active role by having a person, rather than a camera, view the light patterns. The brain’s visual response is recorded and used as feedback for an algorithm that determines how to reshape the projected light patterns and reconstructs the final image.

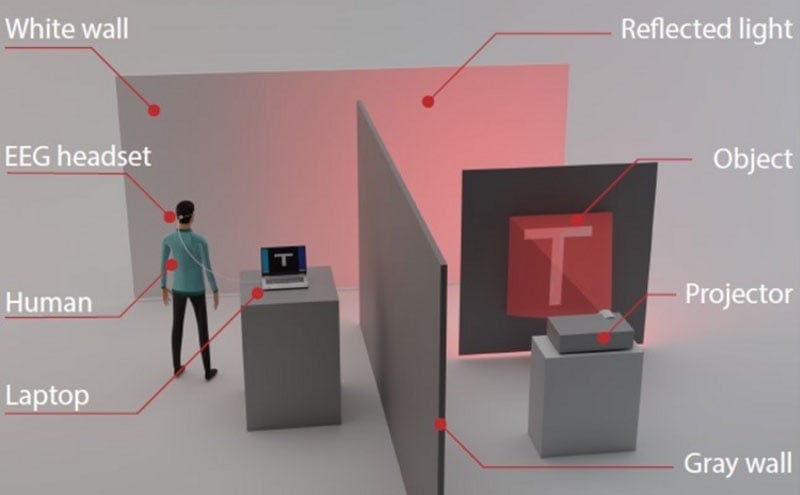

The experiment was set up so that the hidden object was made up of light patterns from a projector cast onto a cardboard cut-out, reports New Atlas. From around the corner, the human participant could only see diffused light on a white wall, which alone wouldn’t be clear enough to make out the original object. This is where the AI component comes in.

The human subjects wore an EEG helmet, which was able to read signals in their visual cortex. These signals were fed into a laptop running AI algorithms, which could then decode the scattered light and identify the object. The researchers showed that their technique could successfully reconstruct 16×16 pixel images of simple objects that could not be seen by the observer. They also demonstrated that the “carving out” process, in which the algorithm reshapes the projected light patterns based on the brain’s response, helped reduce the observation time needed for image reconstruction to about one minute.
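For readers curious about the underlying idea, here is a minimal sketch of computational ghost imaging in Python. It is not the Glasgow team's code: the EEG response is replaced by a simulated single-number brightness reading, the neurofeedback step is left out, and the Hadamard patterns, the test object, and the function names are all assumptions made for illustration.

```python
import numpy as np

def hadamard(n):
    """Build an n x n Hadamard matrix (n must be a power of 2)."""
    h = np.array([[1]])
    while h.shape[0] < n:
        h = np.block([[h, h], [h, -h]])
    return h

def ghost_image(patterns, signals):
    """Weight each pattern by its mean-subtracted signal and average."""
    centered = signals - signals.mean()
    return np.tensordot(centered, patterns, axes=1) / len(signals)

side = 16                      # 16x16 pixels, as in the article
n_pixels = side * side

# Hypothetical hidden object: a bright rectangle on a dark background.
obj = np.zeros((side, side))
obj[4:12, 6:10] = 1.0

# Projected light patterns: Hadamard rows mapped from -1/+1 to 0/1.
# (An assumption; the article does not say which patterns were used.)
patterns = ((hadamard(n_pixels) + 1) / 2).reshape(n_pixels, side, side)

# Simulated stand-in for the brain's response: one brightness value per
# pattern, equal to the total light the object reflects under that pattern.
signals = np.tensordot(patterns, obj, axes=([1, 2], [0, 1]))

recon = ghost_image(patterns, signals)
```

No single reading contains an image. The picture only emerges from correlating many readings with the patterns that produced them, which is why a detector with no spatial resolution, here a single number per pattern, can stand in for a camera.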

“This is one of the first times that computational imaging has been performed by using the human visual system in a neurofeedback loop that adjusts the imaging process in real time,” says lead researcher Daniele Faccio in a press release for Optica’s Imaging and Applied Optics Congress, where the new findings will be presented later this month.

“Although we could have used a standard detector in place of the human brain to detect the diffuse signals from the wall, we wanted to explore methods that might one day be used to augment human capabilities,” adds Faccio.

The new work represents a step toward combining human intelligence with AI. The researchers say that future work will investigate imaging objects in three dimensions and combining data from multiple viewers at the same time.

Image credits: Header photo licensed via Depositphotos.