How Adobe’s Neural Filters Make Colorizing Photos Way Easier

A growing trend among retouchers and editors in the last few years has been to repair vintage photographs that have faded and been damaged over time. In addition to this restoration work, “colorizing” vintage photographs has been experiencing explosive growth. Adobe’s “Colorize” Neural Filter now makes the job easier than ever.

While some historians have been vocally against the practice, the colorization of old images and films is certainly not new. It has simply become easier for more people to do well, and has therefore become far more prevalent. Artists argue that colorizing these old images is meant to “bring the past forward for a modern audience,” while those aforementioned historians believe it widens the gap between now and then by creating a sense of difference rather than immediacy.

In an interview with Wired, Mark Fitzgerald said, “The problem with colorization is it leads people to just think about photographs as a kind of uncomplicated window onto the past, and that’s not what photographs are.”

So the question for creatives really is: even though it is so much easier to do now, should we do it? The discussion is complicated, with valid points on both sides of the table. Many creatives who have spent a lot of time “modernizing” old images and film footage, like Neural Love, say their work makes that material easier for modern generations to appreciate, since most people have little interest in the original footage.

Regardless of the stance being taken, it is clear a lot of brainpower has gone into creating tools that will make the process of colorization faster and easier to do. It may not be perfect, but with the help of Artificial Intelligence (AI) like Adobe Photoshop’s Neural Filters, the task can be completed much faster than before, which provides a massive shortcut in workflows.

But how accurate is Adobe’s colorization? Would photos taken in the past, once given color, look anything like the scenes actually did?

In the GIF below, I took a color photograph from Adak, Alaska at sunset and converted it to black and white in Lightroom. Then, I took that desaturated file into Photoshop and re-colorized it to see how accurately the filter would interpret the original colors.
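For anyone who wants to put a number on this kind of round-trip test rather than judging it by eye, here is a minimal sketch. It assumes both versions have already been loaded as equal-length lists of (R, G, B) pixel tuples; the function name `mean_abs_color_error` and the sample pixel values are hypothetical and made up for illustration, not measurements from the Adak photo.

```python
def mean_abs_color_error(original, recolored):
    """Average per-channel absolute difference between two images.

    Both arguments are equal-length lists of (R, G, B) tuples on a
    0-255 scale. 0.0 means the re-colorized image matches exactly;
    larger values mean the colors drifted further from the original.
    """
    if len(original) != len(recolored):
        raise ValueError("images must have the same number of pixels")
    if not original:
        raise ValueError("images must contain at least one pixel")

    total = 0
    for (r1, g1, b1), (r2, g2, b2) in zip(original, recolored):
        total += abs(r1 - r2) + abs(g1 - g2) + abs(b1 - b2)
    return total / (3 * len(original))


# Made-up two-pixel "images" purely for illustration.
original = [(200, 120, 40), (30, 90, 160)]
recolored = [(210, 110, 40), (30, 90, 190)]
print(mean_abs_color_error(original, recolored))
```

A simple average like this says nothing about where the errors are, so it would not reveal that the highlights or the sunset were the problem areas. It is only a rough way to compare one result against another.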

![]()

What I found interesting about Adobe’s AI is how easily it can identify grass and foliage as well as skylines and colorize them relatively accurately (if a bit oversaturated), even in reflections along the water. That said, the filter seemed to struggle with highlights, like the actual sunset.

When colorizing people, I found Adobe’s AI to be fairly impressive and decently accurate, aside from occasional oversaturation, particularly in the lips, as you can see in the film scan conversions below.

![]()

![]()

One strange thing I found in my testing was that the colorization neural filter seemed to do better with more complex images. The image below of Billie Holiday took Photoshop just a few seconds to colorize, leaving me with an incredible starting point to perfect on a new layer, effectively saving hours of time.

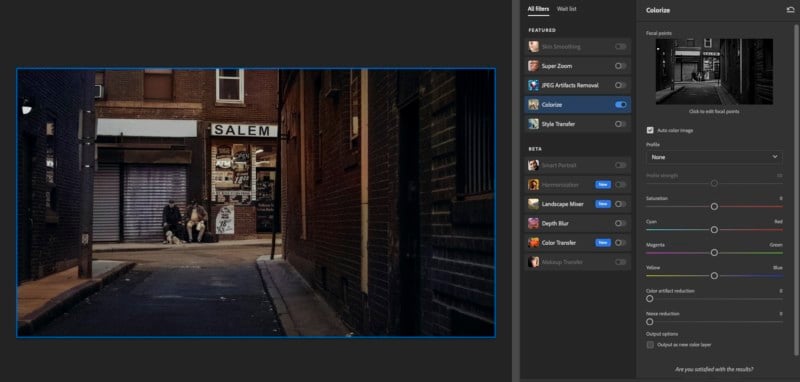

One thing that occurred to me while using the filter was that some creatives may want to use this feature to take a new image captured with the most modern gear and make it look like it was captured on film from the past. As an example, the image below was shot on the Nikon Z6, converted to black and white by just desaturating the image, then loaded into the Neural Filter to re-colorize.

You should remember that with many black and white images, the neural filter will not know what some (or all) of the colors in the image are or should be, leaving that to the discretion and talent of the colorist to adjust. In the set above, the pink and green hues of the model’s sleeves lose their obvious difference in color when shifted to black and white. The result looks like a sleeve with a single color, and that means the filter was left to colorize it assuming a similar hue on each arm. It doesn’t look bad, but it’s not what the sleeve actually looks like. This is something to keep in mind when looking at images from the past. Without knowing what color something really was, assumptions about color, whether made by the editor or an AI algorithm, could be very wrong.
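To see why two clearly different colors can become indistinguishable, here is a small sketch. It assumes a standard Rec. 601 luma weighting for the grayscale conversion. That is only one common formula, and Lightroom and Photoshop may weight the channels differently. The pink and green values are also hypothetical and were picked for illustration, not sampled from the photo.

```python
def luma(rgb):
    """Convert an (R, G, B) pixel to a single gray level (0-255)
    using Rec. 601 weights. This is an assumed formula, not
    necessarily the one Adobe's tools use."""
    r, g, b = rgb
    return round(0.299 * r + 0.587 * g + 0.114 * b)


# Hypothetical sleeve colors chosen for illustration.
pink = (255, 105, 180)
green = (60, 220, 100)

print(luma(pink), luma(green))  # prints "158 158"
```

Once both sleeves are stored as the same gray value, nothing in the file says which one was pink and which was green, so any colorizer, human or AI, has to guess.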

For situations when the application doesn’t get the color just right on its own, you can select “focal points” in the original black and white image and manually set the desired color. It can work rather well, but it will typically have some bleeding issues, as the selected color can creep into the surrounding pixels. Again, the system is not perfect, but it can be a marvelous starting point and will shave hours of editing time off a typical colorization workload.

Below are a few more samples I made pretty quickly in Photoshop:

![]()

![]()

![]()

![]()

![]()

![]()

Technically, the colorization neural filter is still in beta, so according to Adobe it is not yet a finished or polished product. To me, that makes what I’m seeing here even more impressive. It’s possible this feature will get even more accurate and robust once the final version is released.

With enough use and user feedback, I could see the AI Filter becoming mind-blowingly accurate in a very short amount of time. As you can see in the samples above, it may not be perfect, but it works surprisingly well and incredibly fast on nearly every image thrown at it, which I think makes it a fantastic tool, especially for those looking to add color to old black and white images.

Neural Filters are included in Photoshop at no additional cost as part of the Creative Cloud subscription.