US Man Faces 70 Years in Prison For Creating 13,000 AI Child Abuse Images

A U.S. man has been charged by the FBI for allegedly producing 13,000 sexually explicit and abusive AI images of children using the popular Stable Diffusion model.


An investigation into a controversial AI image generator that is allegedly used to make pictures of "child pornography" has led its computing provider to drop it.

Google flagged photos that a concerned father took of his sick child as child sexual abuse material (CSAM) and reported him, sparking a police investigation.

A cache of child sexual abuse photos has allegedly been shown to TikTok moderators during training, according to workers who say they were granted insecure access to illegal photos and videos.

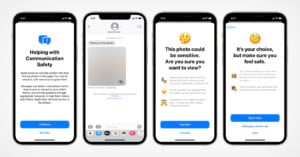

Apple has released iOS 15.2, and it includes at least one photo-centric child protection feature: nudity detection for photos sent in texts. Other safety features are still on hold.