When an AI-Generated Taylor Swift Swindles Social Media Users, Who is to Blame?

There have been numerous examples in the news recently about how generative AI can be used to violate or scam people. Taylor Swift and her fans are the latest victims of deceptive AI practices.

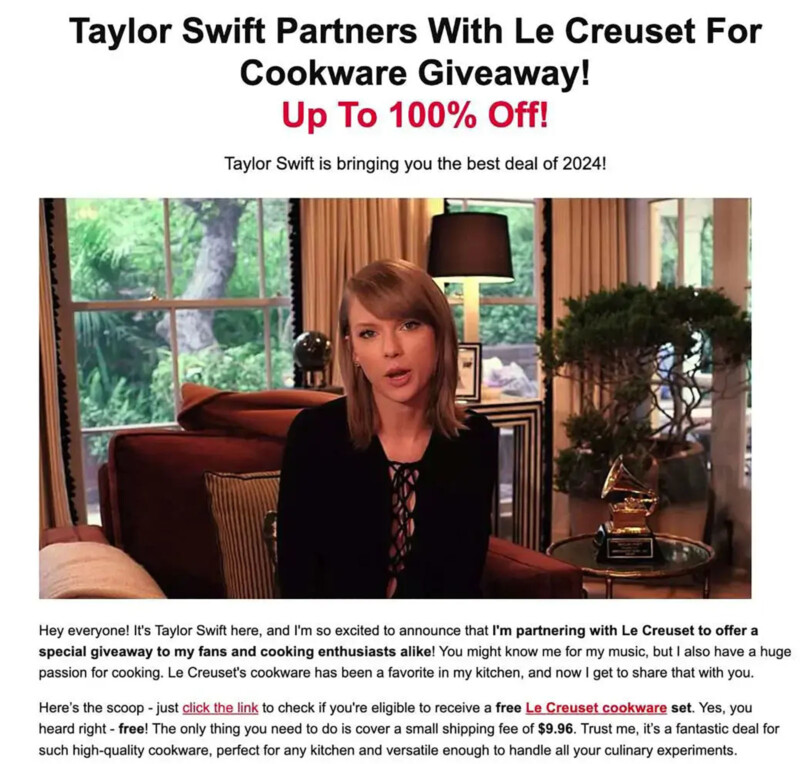

Although a real fan of Le Creuset cookware, Swift did not hawk or endorse the company’s products in recent online advertisements. As the New York Times reports, ads appearing on Facebook and other Meta services have shown real video of Taylor Swift combined with genuine footage of Le Creuset products alongside AI-generated audio of Swift’s voice.

Swift herself is not the only victim here, as Le Creuset itself says it is not involved with the ads, which describe a giveaway for the cookware. Users are asked to participate in a survey to enter the contest, and the French cookware company says it has nothing to do with the survey, purported contest, or misuse of Swift’s likeness.

AI did not usher in fake celebrity ads like this; they have been around for decades. However, AI has made it significantly easier for scammers to steal someone’s likeness, whether it is a photo, audio, or even video. Generative video technology is rapidly improving, so this new avenue will soon be open to online liars, too, joining existing still image and audio fakery.

As The Times explains, “Over the past year, major advances in artificial intelligence have made it far easier to produce an unauthorized digital replica of a real person.”

Dr. Siwei Lyu, a computer science professor at the University at Buffalo, told The Times that the Le Creuset scam ads were likely made using an AI text-to-audio service, which can create an AI-generated voice that someone can easily sync to existing video with a separate lip-syncing app. “It’s becoming very easy, and that’s why we’re seeing more,” the professor explains.

Last April, the Better Business Bureau issued an alert concerning AI celebrity impersonations, writing, “You see a post on social media of a celebrity endorsing a weight loss product, health supplement, or another product. In the post, photos show the celebrity using the product, or a video features their voice talking about the amazing results they’ve seen. It sounds too good to be true, but the photos and video look so real! Also, the social media account appears to belong to the celebrity.”

The Better Business Bureau isn’t necessarily a perfect organization, but it may be right in this case.

While the increasingly convincing nature of these scams is indeed problematic, perhaps just as bad, if not worse, is that many appear in public ad databases for social media websites and can even inadvertently get labeled as “sponsored” content, lending unwarranted credibility to the fake ads. This means that not only are the frauds themselves convincing, but the very manner in which the content is displayed makes it seem more legitimate.

These problems are not limited to celebrities and fake giveaways. Scammers are also using AI-generated likenesses to pose convincingly as real people calling from financial institutions. Others use AI likenesses to trick people into sending sexually explicit content, which is then used to blackmail the victims, a scheme that has had deadly consequences. Fraudsters have used fake celebrities to sell dubious products, including medical supplements, that are not adequately regulated and pose unknown health risks. The list of potential and very real harms goes on and on.

Meta and TikTok claim to have policies in place to address their part in propagating synthetic and deceptive ads. Meta has review systems in place for ads, of course, but deceptive ads frequently skirt these systems and are taken down only after garnering enough attention. TikTok says that ads using “synthetic” media with a celebrity require consent, not that this did much in the recent case.

There aren’t specific federal regulations surrounding the use of AI in scams, either, which is something the government hopes to fix. Of course, some arms of the government are reportedly happy enough to use deepfake technology themselves.

Ultimately, the latest AI-fueled scam highlights significant shortcomings in how content proliferates relatively unchecked on the social media platforms where much of the fake content lives. It seems clear that companies like TikTok and Meta are not harmed anywhere near as much by their failure to regulate ads served on their platforms as are the social media users who fall victim to the ever-more-convincing scams.

Image credits: Header photo licensed via Depositphotos.