Expert Says AI May Pose More Urgent Threat Than Climate Change


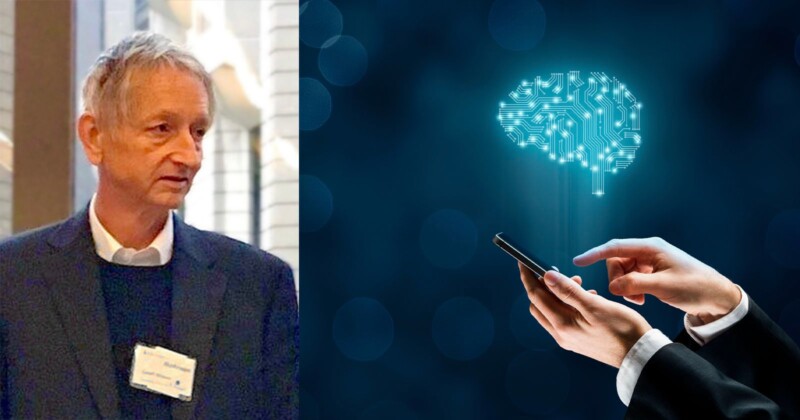

Artificial intelligence (AI) pioneer Dr. Geoffrey Hinton, 75, recently claimed that AI’s threat to the world might be more urgent than climate change.

Hinton spent his career working on neural networks, the mathematical underpinning of the generative AI models that power popular systems like Midjourney and ChatGPT. Since resigning from Google, he has been speaking publicly about the dangers of AI.

In an interview with The New York Times on May 1, Hinton said of AI, “Look at how it was five years ago and how it is now. Take the difference and propagate it forwards. That’s scary.”

“It is hard to see how you can prevent the bad actors from using [AI] for bad things,” he added.

In his lengthy interview with The New York Times, Hinton focused primarily on AI's potential to spread false information and mislead people. He also expressed concern that AI could seriously disrupt the job market.

In a new interview with Reuters, Hinton expressed the idea that AI may present a more urgent threat to Earth than climate change.

“I wouldn’t like to devalue climate change. I wouldn’t like to say, ‘You shouldn’t worry about climate change.’ That’s a huge risk, too. But I think this might end up being more urgent,” Hinton tells Reuters about AI technology.

“With climate change, it’s very easy to recommend what you should do: you just stop burning carbon. If you do that, eventually things will be okay. For this it’s not at all clear what you should do,” Hinton adds.

AI technology is evolving rapidly, and governments have struggled to keep pace with its potential dangers. Last October, the White House released "The Blueprint for an AI Bill of Rights," which sets out five principles it believes should guide the design, use, and deployment of automated systems to protect Americans in the age of AI. In the seven-plus months since the blueprint's release, AI technology has changed dramatically.

More recently, Vice President Kamala Harris and other senior officials met with the chief executives of Google, Microsoft, OpenAI, and Anthropic at the White House last week to discuss the dangers of AI.

Following the release of OpenAI's GPT-4, many AI experts signed an open letter, published by the Future of Life Institute (FLI), calling for a six-month pause on the training of AI systems more powerful than GPT-4 and citing concerns about how such systems might harm humanity at large. The letter states, "Powerful AI systems should be developed only once we are confident that their effects will be positive and their risks will be manageable."


Last month, the Future of Life Institute, the organization behind the letter, published a paper outlining policy recommendations for the governance and regulation of AI technology and its development.

"We have a perfect storm of corporate irresponsibility, widespread adoption, lack of regulation and a huge number of unknowns. [FLI's letter] shows how many people are deeply worried about what is going on. I think it is a really important moment in the history of AI – and maybe humanity," writes Gary Marcus, Professor Emeritus of Psychology and Neural Science at New York University and founder of Geometric Intelligence.

Other experts expressed concern about a lack of oversight and about a competitive environment that pushes AI developers toward increasingly risky behavior.

“Those making these [AI systems] have themselves said they could be an existential threat to society and even humanity, with no plan to totally mitigate these risks. It is time to put commercial priorities to the side and take a pause for the good of everyone to assess rather than race to an uncertain future,” says Emad Mostaque, Founder and CEO of Stability AI.

Among the recommended policies are mandatory third-party audits of certain AI systems, regulated access to computational power, national AI agencies, liability for AI-caused harm, and more.

Hinton, however, does not support pausing research, even though he agrees that AI may pose a significant threat to humanity.

“It’s utterly unrealistic. I’m in the camp that thinks this is an existential risk, and it’s close enough that we ought to be working very hard right now, and putting a lot of resources into figuring out what we can do about it,” Hinton says.

“The tech leaders have the best understanding of it, and the politicians have to be involved. It affects us all, so we all have to think about it,” he continues.

Given how quickly AI is advancing, anyone who isn't thinking about it yet will likely be forced to reckon with it very soon.