An Introduction to Aspect Ratios and Compositional Theory

![]()

Here’s a primer for beginning photographers on the concepts of aspect ratios and compositional theories.

- 1:1 – Square format, traditionally the realm of 6x6cm Hasselblads, and now popularized by various mobile apps.

- 5:4 – Large format and sheet film cameras, mainly 8×10″.

- 4:3 – Broadcast television and video used this aspect ratio, originally in 640×480 pixel resolution; small sensor cameras and compacts (which inherited early video CCD architecture) have been using this aspect ratio ever since. Four Thirds and Micro Four thirds are the larger consumer formats to use it; in medium format there’s also 645 which has the same aspect ratio for both film and digital.

- 3:2 – Double a movie frame; famously invented when Oskar Barnack rotated the film through 90 degrees and doubled the width of the frame to create the 24x36mm ‘full frame’ 35mm camera format. Almost all larger sensored DSLRs use this today.

- 16:9 – HDTV format; not a native aspect ratio for digital still cameras, but useful to provide a more cinematic feel to an image.

- 2.35/2.40:1 – Motion picture widescreen for feature films; very rarely used for still photography, and there are certainly no dedicated digital still cameras that offer exclusively this format. Not only is it extremely wide, if you’re cropping down from a 4:3 sensor you’re throwing away more than half of your image.

Most modern cameras offer different image sizes in-camera, though all they really do is crop the top and bottom or sides. There are a few digital cameras that have sensors bigger than the lens’ image circle, which allow the diagonal angle of view for a given focal length to be maintained when changing crop; the main one of these is the Panasonic LX series of cameras.

Put one of these on a tripod, slide the aspect ratio switch on the lens barrel and you’ll notice that the horizontal field of view gets wider than the 4:3 option, even though this is the native aspect ratio of the sensor. (It also means that you don’t suffer as much of a resolution decrease as you’d expect when changing aspect ratios).

There is no point in shooting in another aspect ratio if all the camera does is throw away the extra pixels; you’re better off capturing as much information as you can at the time of shooting and then deciding later what crop would work best (assuming, of course, that you didn’t compose correctly at the time.)

Now that’s out of the way, the aim of this article is to focus on understanding the compositional impact of different aspect ratios, and more importantly, how to pick the right aspect ratio for a given subject.

There are two ways to go about this – either you shoot only one aspect ratio (for instance 3:2 because you have a digital SLR) and arrange the contents of your frame around it, or you keep an open mind and match the aspect ratio to respect the subject and the dynamic of your composition.

For instance, you might use a 1:1 square for a round object if you want a balanced frame, or you might use 16:9 and just focus on one curve if you want to highlight a particular detail in a cinematic manner – you never see movie shots showing the whole of Earth, for instance; it’s always a hemisphere with the sun rising in just the right place. This is not a coincidence!

Most people will stick to the aspect ratio that is native to the camera, and either do nothing else, or crop to fit later. This is compositionally very, very sloppy – not only do you not get the best frame for the shape of your subject, there’s a very good chance that you probably won’t be able to fill the frame properly, either; 3:2 is a bit of a compromise aspect ratio that lacks the organic intimacy of 5:4 or 4:3 for portraits, or the drama of 16:9 for more expansive scenes.

If you crop to fit the subject exactly, then chances are you’ll probably also land up with a very boring frame – this time, because the composition is too balanced. It seems counterintuitive, but the reality is that such compositions tend to use the space around the subject as a frame, and nothing else; there is no context, secondary subjects or points of interest added.

Don’t despair too much if this situation seems a bit damned if you do, damned if you don’t. The good news is that the one obvious remaining strategy usually works the best if done correctly: learn to fill your frame. For the most part, this is how I operate unless I know the final output (usually client work) is going to be of an certain aspect ratio, or if the scene itself just cries out for a certain shape.

What does filling the frame entail? Well, for one, you should be able to identify your subject – that’s composition 101. The remaining space should be filled with elements that either echo your subject, strengthen the story, or give the subject context. But in no way should they distract the eye from the primary subject. And each smaller element should be placed in the remaining space in a balanced manner.

Here’s a visual example, using dots to represent compositional elements. The big red circle is the subject. The lighter color/lower saturation dots are the secondary, tertiary and other filler.

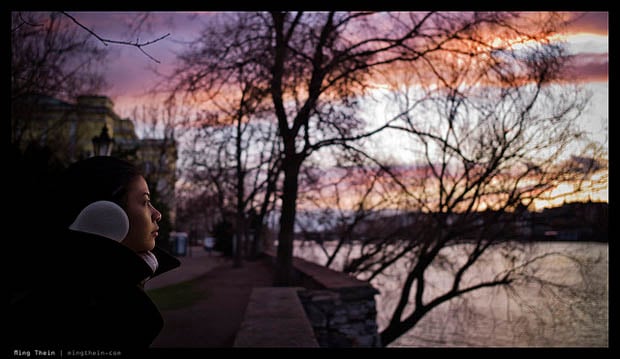

First we place the main subject; where exactly in the frame is up to you, but in general, you leave leading space in front of the subject’s orientation if you want to create expectation (i.e. if a person is in profile, then put most of the space in front of their nose); trailing space if you want to create urgency or drama; or alternatively, let the supporting background elements dictate where to put the primary subject. For this example, let’s just pick an arbitrary starting location – all dots look pretty much the same. I’m going to pick 3:2 as our example aspect ratio.

![]()

Now the secondaries – put them in the white space, with balanced space around them to create both an isolating/highlighting frame, and a buffer zone between them and the main subject so they don’t get confused or overlapped:

![]()

And the same for the tertiaries.

![]()

The other filler rests wherever it rests, but just make sure that distracting elements – say a huge point highlight like the sun, in this case, the blue dot – don’t go in distracting places.

![]()

There – a balanced composition. There isn’t any odd empty space that doesn’t have an equivalent mirror along a given horizontal or vertical (or for that matter, any other orientation) axis. Let’s try this again for 1:1 and the same size original subject:

![]()

And finally, 16:9:

![]()

Notice how for the 16:9 example I didn’t show the whole subject; you don’t always need to. Just so long as you can identify what the subject is, you’ll be fine. In fact, it’s quite easy to imagine this particular frame as say a sunrise over the edge of earth (using our previous example) with a fleet of spaceships in the foreground…

(Oops, I’m getting carried away. Perhaps I should take up modern art, or perhaps designing colorblindness tests.)

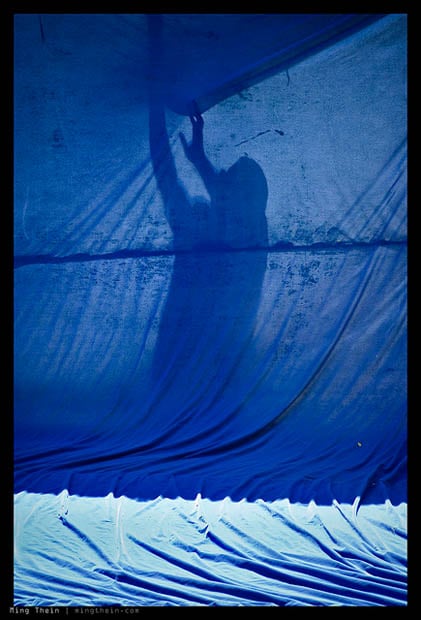

More seriously, there’s a secondary subject intersecting the main subject – does it matter? Only if the intersection causes some perceived division of either, e.g. a horizontal line running through somebody’s head would tend to suggest decapitation to a view, and consequently looks rather odd for a portrait. However, there are also examples where such intersections or juxtapositions can create interesting images in their own right.

Notice how each composition is balanced, but you still instantly know what the main subject is.

Want to make this exercise more realistic? Okay, let’s go back to 3:2 and do one for wide angle, with emphasized perspectives and huge relative size differences in dots:

![]()

And another one for telephoto, with the foreground and background dots blurred to replicate shallow depth of field:

![]()

Once again, notice how your eye is still drawn to the main subject in each composition. Of course, real life isn’t quite this easy; subjects aren’t dots, the white spaces aren’t always uniformly white, and most of all, you almost never have any control over where to put anything else except your main subject; most of the time it’s about waiting for the secondaries to move, or you moving (for immobile subjects) to get the vantage point that works. The only time you have full control is when you’re shooting still life in a studio.

One interesting thing I’d like to bring up at this point is our very restricted use of verticals – almost always, verticals are in more square aspect ratios; 3:2 is about the slimmest vertical aspect ratio you see. You almost never see 16:9 or anything wider; my theory is that it’s both to do with how our eyes natively see, and how content is presented.

Since human eyes are horizontally tandem, we tend to see in a wider horizontal field than vertical; a tall image forces our eyes to scan up and down its length, which means that such images are difficult to compose because they must be broken into zones with complimentary transitions for the composition to work. (The same is not true for horizontals, because we can take in the image at a glance and instantly recognize the surrounding areas as context.)

Making things worse, almost all displays are geared towards this ergonomic trend – as makes sense – when was the last time you saw a vertical monitor? Furthermore, we get subconscious reinforcement through other means that horizontal is the way to go: most cameras only have one grip, and even those that have two make shooting landscapes much easier than portraits; even after we shoot, when we view the images on a monitor, the verticals take up about a third of the space, but the horizontals nearly the entire area – obviously, the larger ones look better, so we tend not to be as influenced by the vertical images…

However, with practice, you’ll learn to see this way – and with more practice, you’ll learn to see this in the instant you identify what you want to have as your main subject; along with lighting, focus, exposure etc. Fortunately, visual pattern recognition is something that human brains are very good at, even for things that have zero fixed quantitative parameters.

For example, we know something is a dog even if it’s in a different orientation, different size, a line drawing or a photograph, or a stylized graphic silhouette. Try programming a computer to do this, and you’ll soon realize both how impossible a task quantifying the distinguishing parameters of a dog is, and what an amazing piece of hardware our brains are. (If you disagree, what marks the difference between a wolf and a dog? Or a dog and a cat? Or a dog and a cow? They’re all four-legged with tails and pointy ears.)

Once again, it comes down to practice. The more you shoot and the more images your brain sees, the faster it becomes at picking out compositions in a live scene that work against ones that don’t. I know that isn’t a concrete answer, but shouldn’t you be out shooting now?

If you enjoyed this post, you can support the author by using this Amazon affiliate link to purchase your gear.

About the author: Ming Thein is a Malaysia-based photographer whose career has spanned fine watches, wildlife, photojournalism, travel, concerts and food. Visit his website here. This post was originally published here.