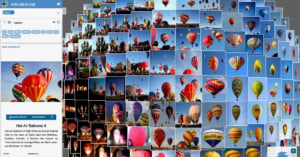

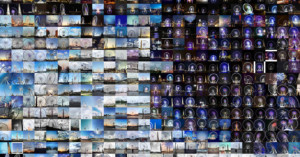

What Famous Photo Spots Would Look Like with Extreme Air Pollution

Despite years of campaigning and government efforts, air pollution is still on the rise around the world. A new series of eye-opening before-and-after photos imagines what iconic photography locations in different countries would look like if pollution levels continue to rise unchecked.