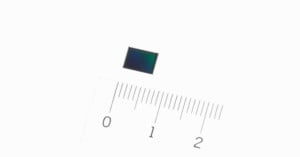

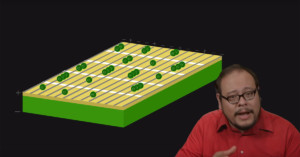

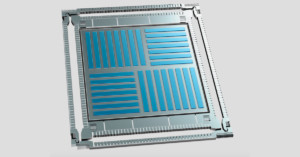

OPPO Unveils the World’s First Sensor-Based Image Stabilizer for Smartphones

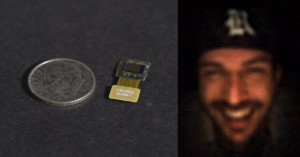

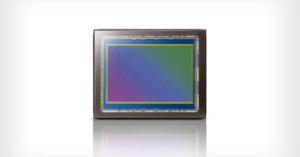

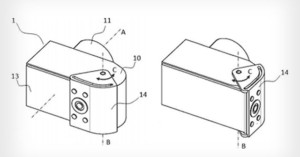

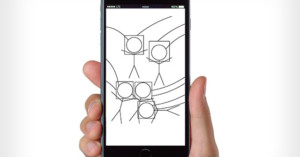

The Chinese electronics company OPPO announced yesterday that it has developed the world's first sensor-based image stabilizer for smartphones. The technology, called SmartSensor, is also the world's smallest image stabilizer for any type of device.