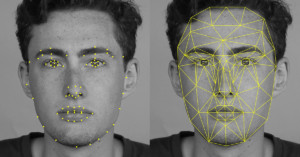

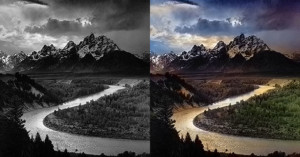

Sneak Peek: Adobe SkyReplace Swaps Out the Skies in Your Photos

At Adobe MAX 2016, Adobe gave a sneak peek of a new technology it's brewing called SkyReplace. The feature makes it extremely easy to replace the sky in a photo, and change the photo's overall look along with it, in just a few clicks and with zero Photoshop knowledge. You can watch it in action in the 5-minute demo above.