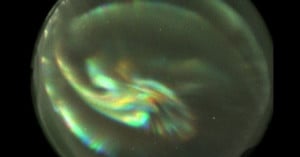

Dual Photography Lets You Virtually Move a Camera for Impossible Photos

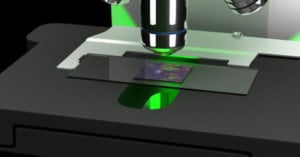

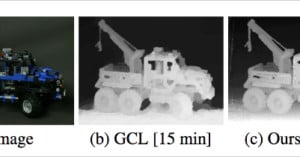

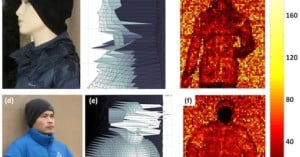

Want to see some mind-blowing photography research from the mid-2000s? Check out the video above about "Dual Photography," a technique developed at Stanford that lets you virtually swap the positions of a camera and a projector, so you can take pictures from the perspective of the light source instead of the camera sensor.
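For the curious, here is a toy sketch of the core idea. The scene is modeled as a "light transport" matrix T, where the camera image equals T multiplied by the projector pattern. By Helmholtz reciprocity, the view from the projector's position, lit by a virtual light at the camera's position, comes from the transpose of T. The tiny matrix below, its values, and the helper names (`primal_image`, `dual_image`) are made up for illustration. In the real technique T is enormous and has to be measured by projecting many patterns, which this sketch skips entirely.

```python
# Toy light-transport model in pure Python (no external libraries).
# T[i][j] is a made-up fraction of light from projector pixel j
# that reaches camera pixel i.

def mat_vec(matrix, vec):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vec)) for row in matrix]

def transpose(matrix):
    """Swap rows and columns of a matrix given as a list of rows."""
    return [list(col) for col in zip(*matrix)]

def primal_image(T, projector_pattern):
    """What the real camera records when the projector shows a pattern."""
    return mat_vec(T, projector_pattern)

def dual_image(T, virtual_light):
    """What a virtual camera at the projector would see under a virtual
    light at the camera; reciprocity means the dual transport is T's transpose."""
    return mat_vec(transpose(T), virtual_light)

# Hypothetical scene: 2 camera pixels, 3 projector pixels.
T = [
    [0.5, 0.1, 0.0],
    [0.0, 0.2, 0.3],
]

print(primal_image(T, [1.0, 1.0, 1.0]))  # one value per camera pixel
print(dual_image(T, [1.0, 1.0]))         # one value per projector pixel
```

Note how the dual image has one value per projector pixel: the projector's resolution becomes the resolution of the "impossible" photo.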