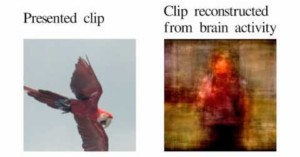

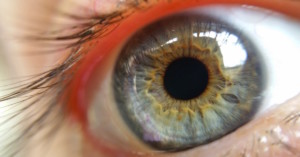

Sony Patents Contact Lens Cam with Zoom, Aperture Control, and More

The future of wearable camera technology is a contact lens. At least, that's what Google, Samsung, and now Sony seem to think. All three have patented their own contact lens cams in the last two years.