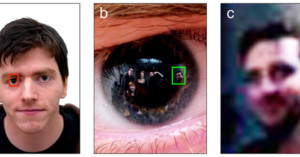

Eyeborg: The Man Who Replaced His Eyeball with a Camera

Rob Spence is a filmmaker who calls himself the "Eyeborg." After losing the sight in his right eye in a shotgun accident at age 9, Spence decided 26 years later to have the sightless eye removed and replaced with a digital camera.