Leica Will Deliver a Mirrorless Medium Format Hybrid Camera ‘Within 2 Years’

Leica's medium format S-system has not seen an update since the S3 debuted in 2018 -- six years is an eternity as far as camera technology is concerned.

The future of Panasonic's organic sensor, a global shutter technology it has been developing with Fujifilm since 2013, doesn't look promising.

It was once common for professional and advanced hobbyist photographers to have small but capable darkrooms in their homes, often tucked away discreetly in what would otherwise be unused spaces in basements and attics. Serious shooters would process their own film, craft their own prints, and store all the chemistry and idiosyncratic accouterment that one needs to control their own analog adventure.

The 11-20mm f/2.8 Di III-A RXD lens for Fujifilm APS-C cameras, whose development Tamron announced last February, is finally coming to market at the end of May for $829.

A former Sony camera developer says that part of the company's strategy in the early days of mirrorless was to lull brands like Canon and Nikon into a false sense of security that the new technology wouldn't be as good as DSLRs -- until it was too late.

In a recent interview, Panasonic says that it hopes to begin commercializing its organic CMOS sensor "in a few years," indicating that the sensor first shown in 2013 is still not close to coming to market.

Panasonic has touted additional benefits of the organic CMOS sensor that it has been working on for nearly a decade, and while the developments still sound enticing, the company seems no closer to release.

One of the greatest things about film photography is its friendliness toward do-it-yourself approaches. Want to hack together a working camera out of discount hardware store supplies? All the power to you! Want to shoot on art paper coated in a home-concocted emulsion, contact-printed using authentic techniques from the 1800s? Why not?

Sigma CEO Kazuto Yamaki says that the company doesn't plan to develop any new Micro Four Thirds (M43) lenses due to demand that is "decreasing very sharply."

Tamron is developing the 11-20mm f/2.8 Di III-A RXD (Model B060) for Fujifilm X-mount, which it describes as a lightweight and wide-angle zoom lens.

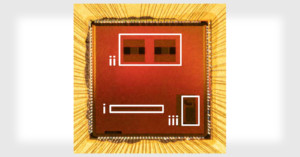

Canon has developed a new sensor that takes a novel approach to high dynamic range (HDR) capture. Rather than taking multiple exposures and blending them into one image, the sensor is divided into sections that each capture their own exposure in a single image.

The digitized, Internet-connected world has actually made film photography easier. As one-hour photo labs began to disappear and many camera stores ditched the darkroom, mail-in photo labs have filled the void.

The process of designing a flagship digital camera is a heavily guarded secret. But in a rare exception, some of the key individuals responsible for the development of the Nikon Z9 sat down and explained some of the defining aspects that brought the camera to life.

The Shenzhen Mingjiang Optical Technology Group, which develops lenses under the TTArtisan brand, has officially joined the Micro Four Thirds Standard Group.

Canon has developed an image sensor that is capable of capturing high-quality color photography even in the dark. The company says that it will be able to shoot clear photos even in situations where nothing is visible to the naked eye.

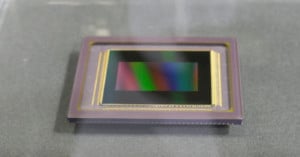

Panasonic claims that its currently in-development Organic Photoconductive Film (OPF) CMOS uses a unique structure that will allow it to achieve high resolution, wide dynamic range, and a global shutter. Basically, it would be a game-changer.

Nikon has announced that it is developing a new 400mm f/2.8 TC VR S prime lens with a built-in 1.4x teleconverter for Nikon Z-mount, which will be the first super-telephoto lens made for Nikon's mirrorless system.

OM Digital has released a new lens roadmap as well as announced the development of two new lenses for Micro Four Thirds (M43), the M.Zuiko Digital ED 20mm f/1.4 Pro and M.Zuiko Digital ED 40-150mm f/4.0 Pro.

Fujifilm has revealed an updated lens roadmap for both the medium format GFX system and its X Series mirrorless cameras.

Tamron has published development announcements for a duo of upcoming lenses. The first is the 28-75mm f/2.8 Di III VXD G2, and the second is the 35-150mm f/2-2.8 Di III VXD. Both will be released for Sony E-mount cameras.

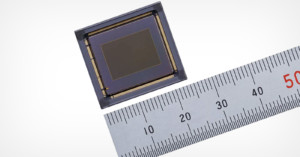

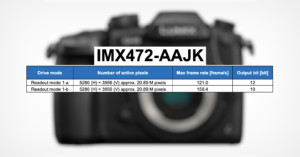

Sony has published a new product information sheet that shows specifications for a new stacked-CMOS 21.46-megapixel Micro Four Thirds sensor that is capable of reading at 120 frames per second across its full width.

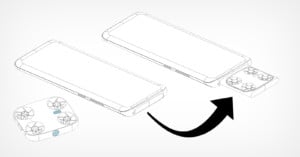

Vivo is reportedly developing a smartphone that includes a tiny quadcopter drone that slides out from the main body of the device, detaches, and can fly away to allow for better selfie photos.

Since Canon's initial development announcement for the EOS R3, rumors have swirled that the company -- despite its statement otherwise -- was not the manufacturer of the backside illuminated sensor at its core. A report published on June 17 stated factually that the R3 sensor is made by Sony, and Canon has responded.

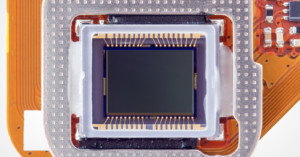

Canon has announced the development of a new professional-focused mirrorless camera called the EOS R3, which will sit between the R5 and the 1DX series. Built for speed, it will feature the first full-frame backside-illuminated CMOS sensor developed by Canon.

Over the past year, it seems the whole world has been on hold due to the rampages of COVID-19. In the Facebook groups I’m in, many users were seeing shortages in analog photography supplies. Some online stores stopped shipping Rodinal (a caustic liquid) and other products were just nowhere to be found.

Tamron has announced the development of the world's smallest and lightest telephoto zoom lens for full-frame Sony E-mount cameras: the Tamron 70-300mm F/4.5-6.3 Di III RXD.

Canon has announced that it has created the world's first single-photon avalanche diode (SPAD) image sensor that's capable of capturing 1-megapixel photos.

Ricoh has announced the development of its third "Star-series lens" and second Prime in the lineup: the HD Pentax-D FA* 85mm f/1.4 SDM AW. Like previous FA* lenses, this 85mm promises to deliver "perfect image quality" and "high quality workmanship" for users of Pentax DSLRs.

Canon has announced the development of the EOS 1D X Mark III, the successor to the 1D X Mark II and a flagship DSLR that has more power, more speed, and more durability.

German researchers have created a new high dynamic range (HDR) CMOS image sensor that features a new pixel design that could pretty much do away with blown highlights.

Last week, Ricoh revealed that it would be releasing a new flagship Pentax APS-C DSLR in 2020, but it shared very few details and only one low-resolution photo of the upcoming camera. Fortunately, photographer Niels Kemp was at the Pentax 100 Years of History event in The Netherlands, and sent us a few first-look photos.

It's a day for development announcements! On the same day as Fuji revealed its upcoming Fujifilm X-Pro3 rangefinder, Ricoh broke its long silence to reveal that it is developing a brand new Pentax flagship APS-C DSLR to replace the KP.

Panasonic has announced the new LUMIX S1H full-frame mirrorless camera, the third L-mount camera after the S1 and S1R announced back in February 2019. The S1H is the world's first digital interchangeable lens camera to offer 6K/24p video recording.

Sony announced today that it has developed a new rewritable film material. Just as rewritable optical discs can be erased and written to again, Sony's new film can have full-color photos drawn onto them, be erased, and then be reused for another photo.

Tamron has just announced the development of two new full-frame DSLR lenses (the 35-150mm F/2.8-4 Di VC OSD and SP 35mm F/1.4 Di USD) and one new full-frame mirrorless lens (the 17-28mm F/2.8 Di III RXD).

Canon has announced the development of six upcoming RF mirrorless lenses: two 85mm lenses, a 24-70mm f/2.8L IS, a 15-35mm f/2.8L IS, a 70-200mm f/2.8L IS, and a 24-240mm f/4-6.3 IS.

Guess who's planning to join the mirrorless camera war? Yongnuo, the Chinese company best known for creating cheap clones of popular Canon and Nikon lenses.

Panasonic has announced the development of the Leica DG 10-25mm f/1.7 for Micro Four Thirds cameras. It's the world's first wide-angle zoom lens with a constant f/1.7 aperture.

Sigma has announced that it's developing a full-frame L-mount mirrorless camera as part of the company's new L-Mount Alliance with Leica and Panasonic. At the core of the camera will be a sensor with Sigma's Foveon technology.

Ricoh has announced the development of the highly-anticipated GR III high-end digital compact camera. It's designed to be "the ultimate street photography camera."