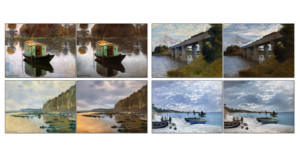

This Algorithm Can Remove the Water from Underwater Photos, and the Results Are Incredible

An engineer has developed a computer program that can, in her words, "remove the water" from an underwater photograph. The result is a "physically accurate" image with all of the vibrance, saturation and color of a regular landscape photo.